kottke.org posts about robots

Sony’s AI division has designed a robot that can beat elite human players at table tennis. From the paper:

Evaluated in matches against elite and professional players under official competition rules, Ace achieved several victories and demonstrated consistent returns of high-speed, high-spin shots. These results highlight the potential of physical AI agents to perform complex, real-time interactive tasks, suggesting broader applications in domains requiring fast, precise human–robot interaction.

Ace is a fine name, but I might have gone with something like WALL-E Supreme instead. (Robbie Supreme?)

The Library of Congress recently discovered a copy of a “long-lost” film made in ~1897 by Georges Méliès called Gugusse and the Automaton (Gugusse et l’Automate), which “had not been seen by anyone in likely more than a century” and “was the first appearance on film of what might be called a robot”. It’s also one of the first science fiction films ever made.

You can watch a digitized copy of the whole film here (it’s only 45 seconds long):

And here’s the story of how the film was discovered.

Equally delighted was Bill McFarland, the donor who had driven the box of films from his home in Grand Rapids, Michigan, to the Library’s National Audio-Visual Conservation Center in Culpeper, Virginia, to have the cache evaluated.

His great-grandfather, William Delisle Frisbee, had been a potato farmer and schoolteacher in western Pennsylvania by day, but by night he was a traveling showman. He drove his horse and buggy from town to town to dazzle the locals with a projector and some of the world’s first moving pictures.

He set up shop in a local schoolroom, church, lodge or civic auditorium and showed magic lantern slides and short films with music from a newfangled phonograph. It was shocking.

“They must have been thrilled,” McFarland said. “They must have been out of their minds to see this motion picture and to hear the Edison phonograph.”

A group of three students at Purdue University have shattered the world record for the fastest Rubik’s Cube solve by robot — their bot solved the cube in just 0.103 seconds (103 milliseconds). As a comparison, the former record was 305 milliseconds and “a human blink takes about 200 to 300 milliseconds”. As one of the students said, “So, before you even realize it’s moving, we’ve solved it.”

The world record for a human solve is 3.13 seconds by Max Park in 2023 (not anymore, see comments). (via we’re here)

Mark Rober built a robot that solves jigsaw puzzles and pitted it against Tammy McLeod, one of the world’s fastest human solvers. The design and build process is fascinating, especially the fine-tuning that lets the robot “wiggle” each piece into place.

When we first tried to assemble the puzzle, almost none of the pieces fit together perfectly. This was before we had corrected the errors in the computer vision code as described earlier.

However even after we improved the computer vision code, some small errors remained. Many pieces would fit together perfectly, and then you would see one that was ever so slightly out of place, and that could ruin the alignment for the rest of the puzzle if left unresolved.

To solve this, we took inspiration from humans. If you try to place a puzzle piece with your hands, you’ll find that often you need to wiggle the piece around to get it to snap into place. So we programmed the robot to do the same thing.

Also, Kristen Bell shows up?

Ok, this is super freaky: this is a regular analog piano being played by a computer-controlled mechanical machine and it sounds like a person speaking. If you haven’t seen this before (it’s from 2009), take a listen:

Deus Cantando is the work of artist Peter Ablinger. He recorded a German school student reciting some text and then composed a tune for the mechanical player to sound like the recitation. I cannot improve upon Jason Noble’s description of the work:

This is not digital manipulation, nor a digitally programmed piano like a Disklavier. This is a normal, acoustic piano, any old piano. The mechanism performing it consists of 88 electronically controlled, mechanical “fingers,” synchronized with superhuman speed and accuracy to replicate the spectral content of a child’s voice. Watching the above-linked video, it may seem that the speech is completely intelligible, but this is partially an illusion. The visual prompt of the words on the screen are an essential cue: take them away, and it becomes much harder to understand the words. But it is still remarkable that the auditory system is able to group discrete notes from a piano into such a close approximation of a continuous human voice, and that Ablinger was able to do this so convincingly using a conventional instrument (albeit, played robotically).

This is so cool, I can’t believe I’d never seen it before. (via @roberthodgin)

It’s a trip watching how fast CyberRunner can run a marble through this wooden labyrinth maze.

Labyrinth and its many variants generally consist of a box topped with a flat wooden plane that tilts across an x and y axis using external control knobs. Atop the board is a maze featuring numerous gaps. The goal is to move a marble or a metal ball from start to finish without it falling into one of those holes. It can be a… frustrating game, to say the least. But with ample practice and patience, players can generally learn to steady their controls enough to steer their marble through to safety in a relatively short timespan.

CyberRunner, in contrast, reportedly mastered the dexterity required to complete the game in barely 5 hours. Not only that, but researchers claim it can now complete the maze in just under 14.5 seconds — over 6 percent faster than the existing human record.

CyberRunner was capable of solving the maze even faster, but researchers had to stop it from taking shortcuts it found in the maze. (via clive thompson)

Oh this is so nerdy and great: Veritasium introduces us to Micromouse, a maze-solving competition in which robotic mice compete to see which one is the fastest through a maze. The competitions have been held since the late 70s and today’s mice are marvels of engineering and software, the result of decades of small improvements alongside bigger jumps in performance.

I love stuff like this because the narrow scope (single vehicle, standard maze), easily understood constraints, and timed runs, combined with Veritasium’s excellent presentation, make it really easy to understand how innovation works. The cars got faster, smaller, and learned to corner better, but those improvements created new challenges that required further solutions to bring the times down even more. So cool.

This is almost beyond meditative: watch Reuben Margolin’s robotic caterpillar very slowly scale a woodpile.

The woodpile is not as random as it looks, but follows a predetermined polynomial spline within certain bounds of curvature. It is made of scrap wood and took about week to make. The caterpillar took several months, although a lot of that time was spent learning about servo motors, micro-controllers, Terminal and Python, and learning how to use an oscilloscope to trouble shoot the square wave signal that carries the angular information.

(via clive thompson)

It’s always fun to observe robots moving in surprisingly human ways, and this slack-linin’, skateboardin’ drone robot named Leo hopping off a ledge like a slow-mo Trinity in the Matrix certainly qualifies. And it walks all jaunty and sexy, like John Travolta in Saturday Night Fever?

In an artwork commissioned by the Guggenheim Museum called Can’t Help Myself, Sun Yuan and Peng Yu created a robotic arm designed to keep a blood-colored liquid from straying too far away.

Placed behind clear acrylic walls, their robot has one specific duty, to contain a viscous, deep-red liquid within a predetermined area. When the sensors detect that the fluid has strayed too far, the arm frenetically shovels it back into place, leaving smudges on the ground and splashes on the surrounding walls.

Sounds a bit like everyone trying to do everything these days. This artwork has been popular on TikTok because people empathize with the machine, which seems to be slowing down.

“It looks frustrated with itself, like it really wants to be finally done,” one comment with over 350,000 likes reads. “It looks so tired and unmotivated,” another said.

Finch is a movie starring Tom Hanks, whose character befriends a dog in post-apocalyptic America and then builds a robot to protect the dog. It’s like Short Circuit meets I Am Legend meets Turner & Hooch meets Cast Away meets Terminator 2. The only reason I am telling you about this preposterous-sounding entertainment product is that David Ehrlich (who is responsible for the epic movie recaps I post every year) wrote a mostly favorable review of it. The star of the show, says Ehrlich, is Jeff, the dog’s robot bodyguard:

Dewey sets the tone as the first of Finch’s manufactured friends. An articulating arm that’s attached to a metal cube on wheels, the prototype is lovable despite being only lightly anthropomorphized, and the decision to cast him as a 100-percent practical animatronic makes it that much easier for your eyes to accept that Jeff is just as real (Jones’ on-set motion-capture work and top-notch CGI help to complete the illusion). From the moment Finch powers him up, there isn’t a doubt in your mind. In fact, Jeff is so tactile and endearing that a more adorable design might have risked a kind of overkill; essentially an oblong, gourd-like orange cushion affixed with two protruding camera eyes and squished on top of a giant chassis of exposed titanium joints, Finch’s magnum opus doesn’t seem like the solution to all his problems so much as a robot Cousin Greg who’s been programmed with Asimov’s Three Laws plus a prime directive to “protect dog above all else.” He can only be loved for his potential.

It’s streaming on Apple TV+ starting this Friday. I might… watch it?

Artist & engineer JBV has built a robot artist called Flingbot that works by throwing paint at a canvas according to a number of randomized parameters, e.g. fling strength, scoop shape, paint color, and throwing angles:

The next parameters are the starting and ending fling angles. Randomly chosen by the code, the trajectory and point it hits the canvas is variable creating another factor of uniqueness. This is all controlled by a servo under the base of the catapult, which also happens to be the motor that allows Flingbot to position itself under the paint reservoirs.

This means that every painting Flingbot creates is effectively unique.

All in all, accounting for all the different parameters, there are almost 3 trillion paintings that Flingbot can make. The number is likely even higher than this because there are even more variables to consider that are out of Flingbots control. These include the consistency of the paint, the angle of the canvas, the temperature in the room…the list goes on. It’s safe to say that each one of Flingbot’s painting is truly one of a kind.

(via the morning news)

HERMITS are tiny experimental robots developed by researchers at MIT’s Media Lab that can move between different “shells” to gain new capabilities.

Inspired by hermit crabs, we designed a modular system for table-top wheeled robots to dock to passive attachment modules, defined as “mechanical shells.” Different types of mechanical shells can uniquely extend and convert the motion of robots with embedded mechanisms, so that, as a whole architecture, the system can offer a variety of interactive functionality by self-reconfiguration.

(via fast company)

Well. The robots sure are getting good at moving around — running, jumping, doing flips, casually vaulting over railings like an eighth grader trying to impress friends. It is eerie and weird and uncanny and all other such adjectives watching these machines smoothly caper around like humans. Even in the blooper reel they seem really toddler-esque.

A Brooklyn company called Pliant Energy Systems has developed a prototype of an amphibious robot that can swim, skate, slither, and crawl through water and over all different kinds of terrain. The secret is an undulating propulsion system that can be modified on the fly to adapt to different conditions.

Velox can use several modes of locomotion found in the animal kingdom using just one pair of “fins”. These fins are best described as four-dimensional objects with a hyperbolic geometry that allows the robot to swim like a ray, crawl like a millipede, jet like a squid, and slide like a snake.

A craft equipped with this system has unprecedented freedom to travel through a range of environments in a single mission. As an underwater vehicle, the robot’s ability to instantly reverse direction and do quick turns make it ideal for task such as coral reef inspection or dragon fish hunting where a craft must rapidly maneuver to look around and between objects.

(thx, dunstan)

Boston Dynamics programmed their Atlas robot to do a gymnastics routine.

I lost it when it did that little jump split at about 13 seconds in. That looked seriously human in a deeply unsettling way.

Mark Rober built a rock skipping robot and by adjusting a bunch of different parameters, he figured out the best way to skip rocks. And no, I completely did not get out a notepad and start jotting down notes while watching this video and there’s no way I’m heading out to one of my favorite rock skipping places tomorrow morning to try out some new techniques. Nope. Not gonna happen. (thx, tom)

A robot built by a pair of engineering students at MIT can solve a Rubik’s Cube in 0.38 seconds (which happens to be 19 minutes and 59.22 seconds shorter than my fastest time):

0.38 seconds is over in an almost literal flash, so the video helpfully shows this feat at 0.25x speed and 0.03x speed. I bet when they were testing this, they witnessed some spectacular cube explosions. (via @tedgioia)

Buying vintage and collectible sneakers online has become so complicated that a secondary industry has sprung up. Programmers create bots to outbid humans at auction. Initially, these were used just to snap up shoes to be sold at a markup on secondary markets. Eventually, though, enterprising botmakers realized they could avoid having to deal with shoes altogether by selling their bots to thirsty sneakerheads directly. At Complex, Tommie Battle breaks it down:

It’s now seen as a must that you need a bot. Copping “manually” is a risky endeavor—a misstep in entering credit card info or your address could mean that the item that was once on its way to being delivered to your door is now swept from under your virtual feet. That very sequence has happened to so many customers at this point that it’s now a part of the release date experience, and there is seemingly no light at the end of the tunnel.

The exclusivity will always be there in the world of streetwear, and it’s quite possible that the exclusivity is what drives brands to stay the course in regards to how they handle not only the availability, but the probability of purchasing their product. After all, the hype doesn’t follow every sneaker. The issue remains that the playing field must be even, especially in the digital realm. The old phrase is that the customer is always right, but what happens if the customer is a mindless bot?

The main question I have is this: what other economies have been disrupted by bots? Presumably, the rest of the collectors’ markets haven’t been untouched by this. The fundamental technologies and principles are basically the same. Entertainment, too, in the form of event tickets, and anything else sold at auction or to the first buyer. But how deep does it go? How far has the rot spread?

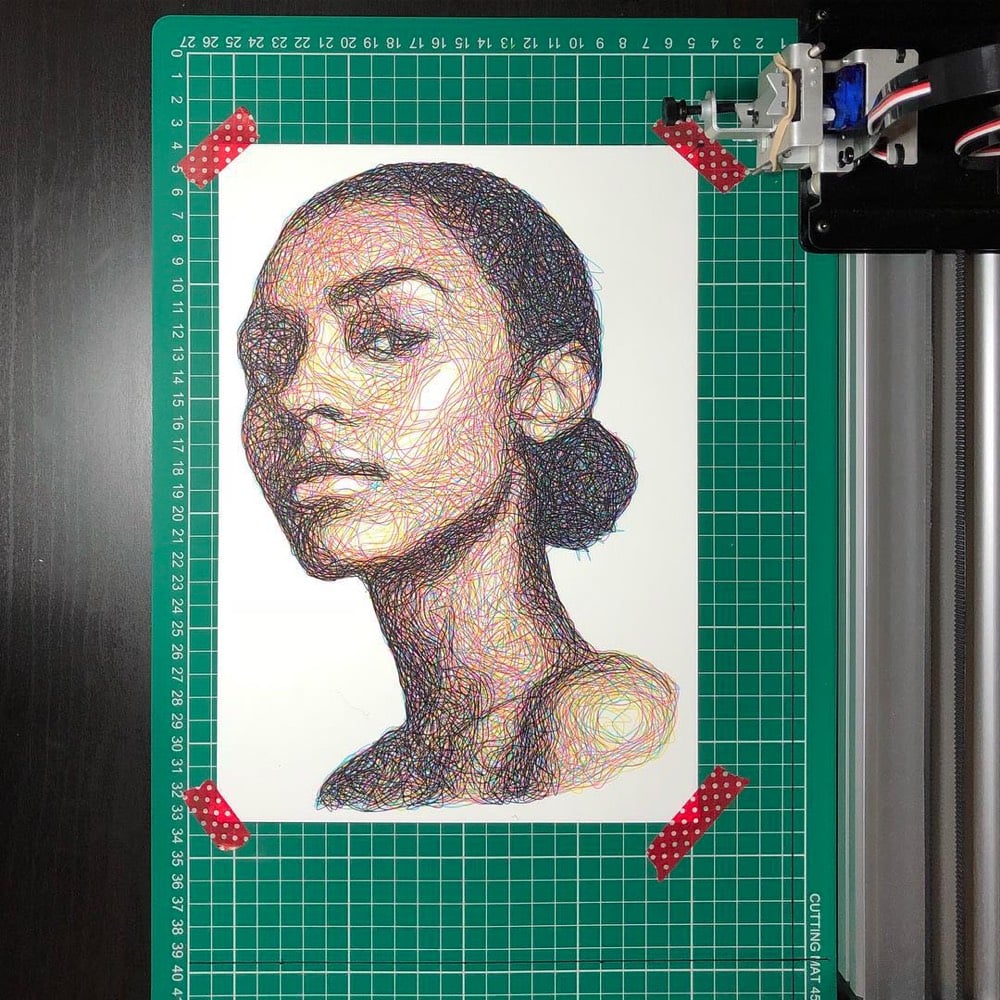

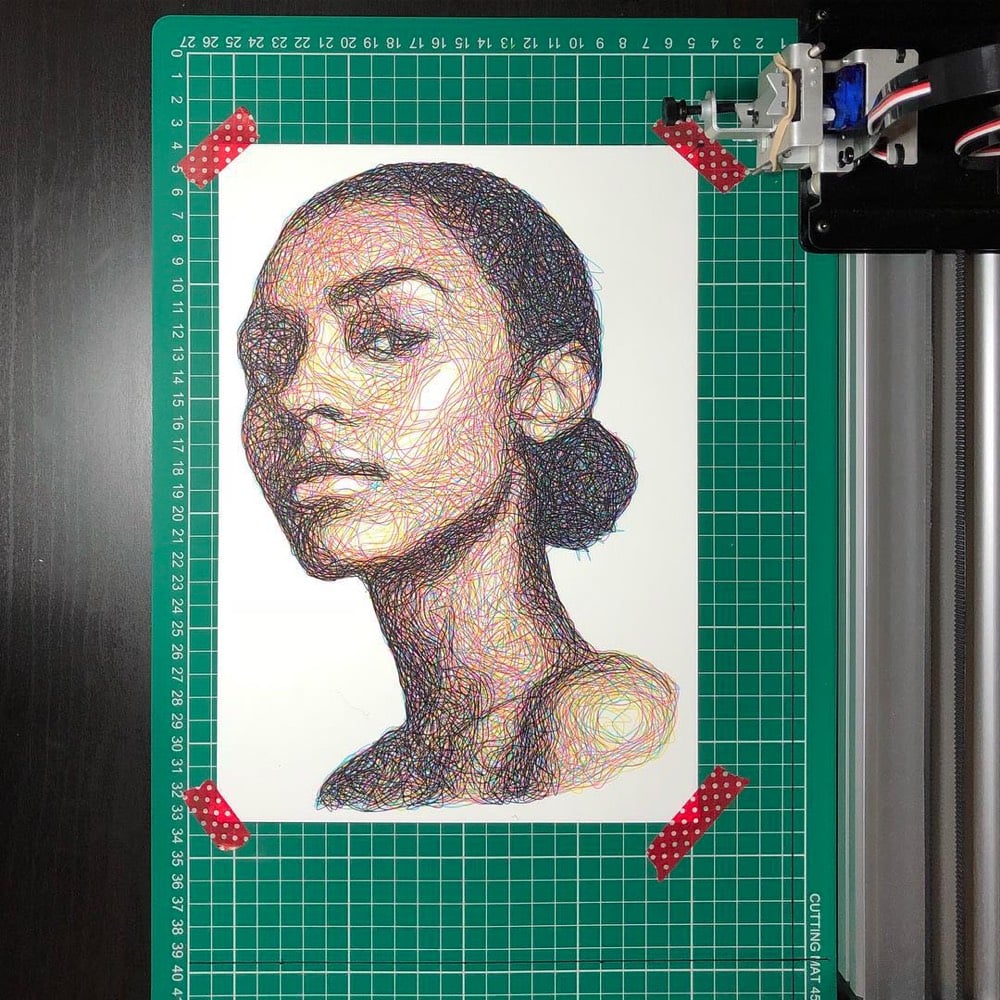

Samer Dabra uses a drawing machine called the AxiDraw and a custom program to generate Impressionistic line drawings of people. The machine builds the portraits using four single lines drawn in the four CMYK colors, one on top of another, with minimal tweaking from Dabra. Rion Nakaya of The Kid Should See This edited together a video of the machine creating drawings.

There is something more than a little Vincent van Gogh & Georges Seurat about these. You can see the results on Instagram.

Hot on the heels of their video showing a humanoid robot casually doing parkour, Boston Dynamics has made a clip of their robot dog doing a hip hop dance routine to Uptown Funk.

While the robot in the parkour video looked distinctly un-human at times, I have to say that this dog robot is a much better and more fluid dancer than I expected — it’s got better moves than most of the people I’ve seen dancing at Midwestern weddings. The robot does what looks like the running man and then twerks while mugging for the camera. I don’t know what level of cultural appropriation this is and Boston Dynamics is probably just doing this to distract from the whole Terminator narrative, but was anyone else the tiniest bit jealous of and turned on by (and then deeply ashamed of those feelings) the robot’s moves?

I’ve watched this a dozen times now and it looks fake and I can’t figure out quite why. Is it the uncanny valley at work? This thing moves mostly like a human would…but not entirely. Look at the robot when it hops over the log. It appears too effortless…not enough recoil on the landing or something. And going up the steps, it looks like it’s levitating, like how CG characters sometimes move, unaffected by actual contact with surfaces. See also the robot’s casual gymnastics.

For those of us who have never quite gotten the hang of solving the popular puzzle, some wonderful genius has constructed a self-solving Rubik’s Cube. There don’t seem to be any details available about how it works, but based on the videos, it seems likely the electronics inside record the moves when the Cube is mixed up and then simply perform them in reverse. (via fairly interesting)

Update: If you read the comments at Metafilter, it appears my speculation about how the Cube works is wrong…it appears to actually be solving itself, not just reversing moves.
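For the curious, here’s a minimal sketch of what that record-and-reverse idea would involve (again, not how this particular cube works). It assumes standard Singmaster notation, where a face letter like R means a clockwise quarter turn, R' a counterclockwise one, and R2 a half turn; the function names are made up for illustration. The one subtlety is that playing the recorded moves backwards isn’t enough: each turn also has to be flipped.

```python
# Hypothetical sketch of the "record the scramble, then undo it" idea.
# Moves use Singmaster notation: a face letter, optionally followed by
# ' (counterclockwise quarter turn) or 2 (half turn).

def invert_move(move: str) -> str:
    """Return the move that undoes a single face turn."""
    if move.endswith("2"):
        return move          # a half turn is its own inverse
    if move.endswith("'"):
        return move[:-1]     # R' is undone by R
    return move + "'"        # R is undone by R'

def undo_scramble(scramble: list[str]) -> list[str]:
    """Undo a recorded scramble: reverse the order AND invert each move."""
    return [invert_move(m) for m in reversed(scramble)]

if __name__ == "__main__":
    scramble = ["R", "U", "R'", "F2", "D'"]
    print(" ".join(undo_scramble(scramble)))  # prints: D F2 R U' R'
```

Undoing the undo gets you the original scramble back, which is a handy sanity check that both the reversal and the per-move flip are right.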

Add finding Waldo to the long list of things that machines can do better than humans. Creative agency Redpepper built a program that uses Google’s drag-and-drop machine learning service to find the eponymous character in the Where’s Waldo? series of books. After the AI finds a promising Waldo candidate, a robotic arm points to it on the page.

While only a prototype, the fastest There’s Waldo has pointed out a match has been 4.45 seconds which is better than most 5 year olds.

I know Skynet references are a little passé these days, but the plot of Terminator 2 is basically an intelligent machine playing Where’s Waldo I Want to Kill Him. We’re getting there!

Update: Prior art: Hey Waldo, HereIsWally, There’s Waldo!

Fittingly using only off-the-shelf components, a team of researchers in Singapore built a robot capable of assembling a Stefan chair from Ikea (minus actually bolting it together). The assembly time was around 20 minutes, about 5-10 minutes slower than a typical human would take.

It took a few attempts to get it right. Early on, the robots dropped wooden pins, let go of parts too soon, and performed moves that did more to dismantle the chair than assemble it. Some moves required a part to be held by both robots at the same time, and since industrial robots are far stronger than Ikea furniture, a number of mistakes ended badly. “We bought four chair kits and broke a few of them,” said Pham.

Once the robot can fully assemble Ikea furniture in near-human timeframes, I propose we stop all robotics and AI research. When humanity no longer has to struggle with Ikea assembly, we can live like Scandinavian kings and not have to worry about AI murderbots killing us all (before they get bored, of course).

Researchers at Harvard have developed a milliDelta robot that is very precise and moves very quickly. The video shows the robot moving so quickly (making circles up to 75 times per second) that the motion blurs, like Neo at the end of the first Matrix movie. From the description of a second video showing the milliDelta bot:

Our design is powered by three independently controlled piezoelectric bending actuators. At 15mm x 15mm x 20mm, it has a payload capacity of ~3x its mass. It can operate with precision down to ~5um, at frequencies up to 75Hz, and experience accelerations of ~22g.

This robot would kill at Track & Field on the NES.

We’ve seen autonomous swarming killer robots before (in Black Mirror and other places), but this video presents a particularly plausible scenario for their development: a venture-backed company led by a Travis Kalanick-style CEO combining tiny drones invented by a playful technologist, AI-powered facial recognition, and miniature explosives to make tiny killbots that will no doubt disrupt the world while creating a ton of shareholder value.

The video is produced by a group that wants to ban autonomous weapons, and I think these things will probably be banned in some form, possibly by banning drones and some kinds of consumer electronics altogether. What struck me most while watching this is that if guns were a new invention, they would most likely be banned in the US, just like lawn darts or explosive devices. A hand-held machine that can kill a person 1000 feet away and hides easily in a pocket? That sounds like a dangerous, litigious nightmare, just the sort of thing the US routinely regulates against for the safety of its people.

So, the jumping from box to box seemed cool. Hey, robot parkour! It seemed awfully agile for something that looks like it weighs quite a bit, but ok. But the casual gymnastics about 20 seconds in broke my brain. Holy. Crap.

Robots fighting each other in arenas is a popular sporting event; see Robot Wars. In Japan, such competitions often take place in small sumo rings and the robots need to move incredibly fast to achieve victory. Robert McGregor compiled some of the fastest and most vicious footage in this video…and none of the footage is sped up in any way. Note the protective leg pads worn by the referee in many of the clips…there must have been an “incident”. (via @domyates)

This new video by Kurzgesagt examines automation in the past (“big stupid machines doing repetitive work in factories”) and argues that automation in the information age is fundamentally different. In a nutshell, whereas past automation resulted in higher productivity and created new and better jobs for a growing population, automation in the future will happen at a much quicker pace, outpacing the creation of new types of jobs for humans.

Their two main sources for the video are Martin Ford’s Rise of the Robots and The Second Machine Age by Erik Brynjolfsson and Andrew McAfee.
