kottke.org posts about brain

Sleep is one of those things we still don’t completely understand, and new discoveries are still being made. This research is quite interesting, as it offers some insight into how sleep clears toxins from the brain.

“First you would see this electrical wave where all the neurons would go quiet,” says Lewis. Because the neurons had all momentarily stopped firing, they didn’t need as much oxygen. That meant less blood would flow to the brain. But Lewis’s team also observed that cerebrospinal fluid would then rush in, filling in the space left behind.

The brain’s electrical activity is moving fluid in the brain, clearing out byproducts like beta amyloid, which can contribute to Alzheimer’s disease.

So brain blood levels don’t drop enough to allow substantial waves of cerebrospinal fluid to circulate around the brain and clear out all the metabolic byproducts that accumulate, like beta amyloid.

Independent of the science, you have to wonder how people manage to sleep in an MRI machine!

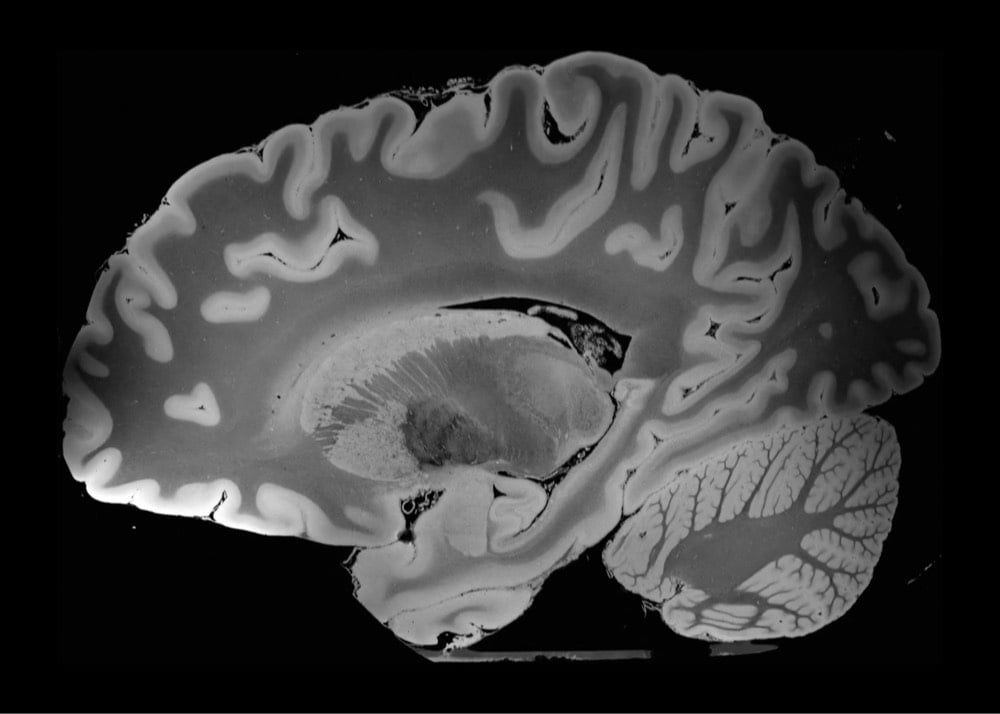

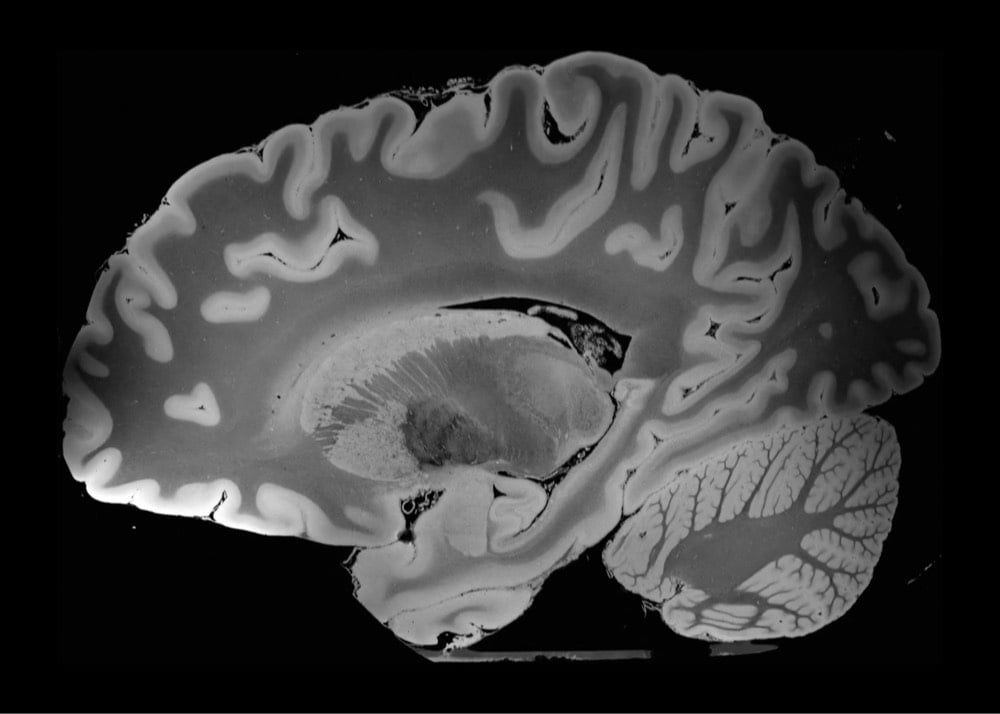

A team of researchers at the Laboratory for NeuroImaging of Coma and Consciousness have done an ultra-high resolution MRI scan of a human brain. The scan took 100 hours to complete and can distinguish objects as small as 0.1 millimeters across.

“We haven’t seen an entire brain like this,” says electrical engineer Priti Balchandani of the Icahn School of Medicine at Mount Sinai in New York City, who was not involved in the study. “It’s definitely unprecedented.”

The scan shows brain structures such as the amygdala in vivid detail, a picture that might lead to a deeper understanding of how subtle changes in anatomy could relate to disorders such as post-traumatic stress disorder.

The video above shows the scanned slices of the entire brain from side to side.

You can view/download the entire dataset of images here.

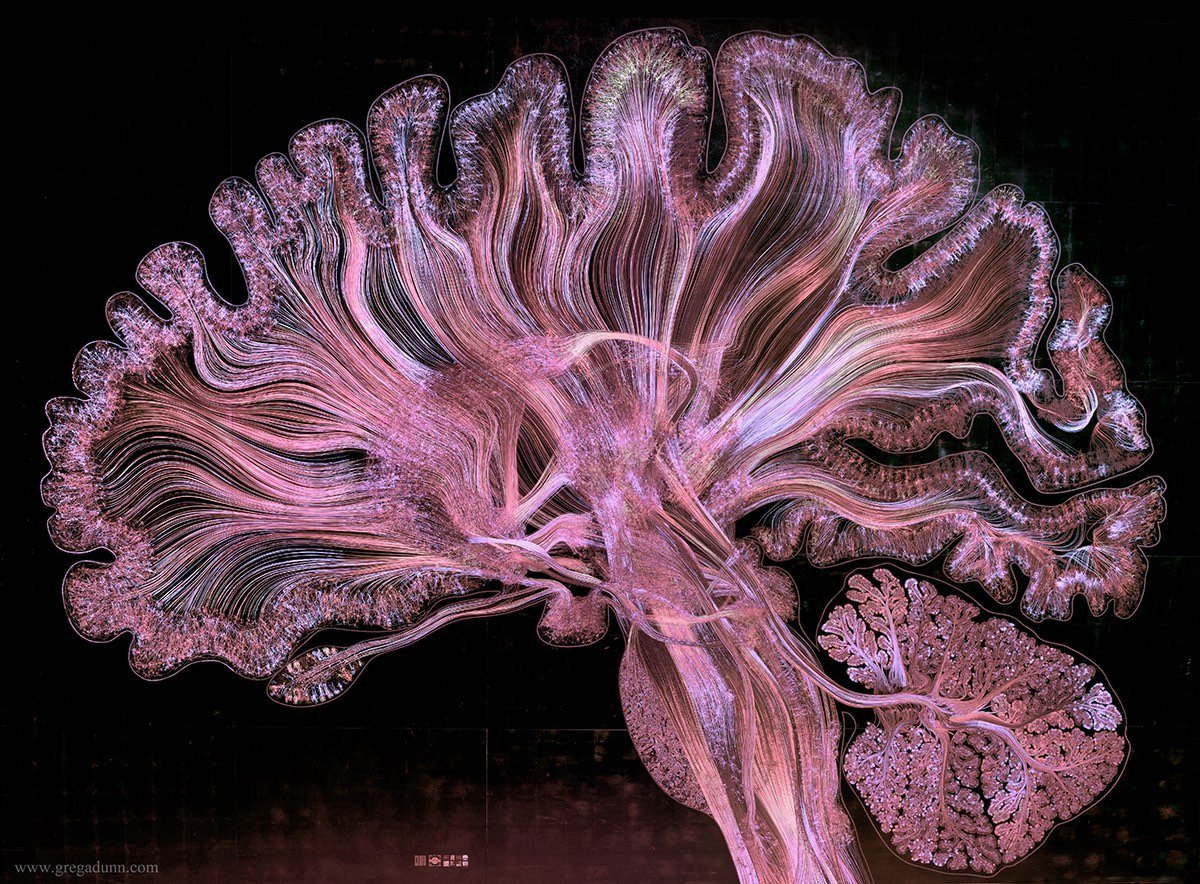

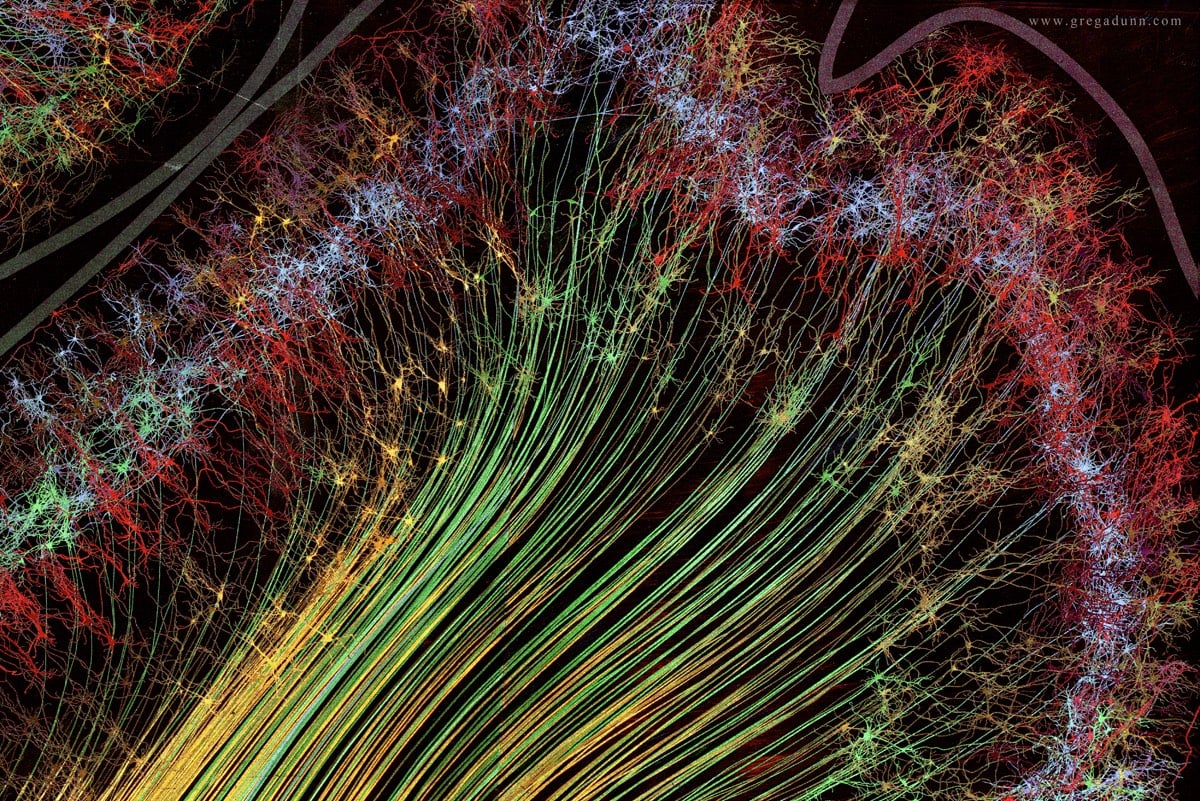

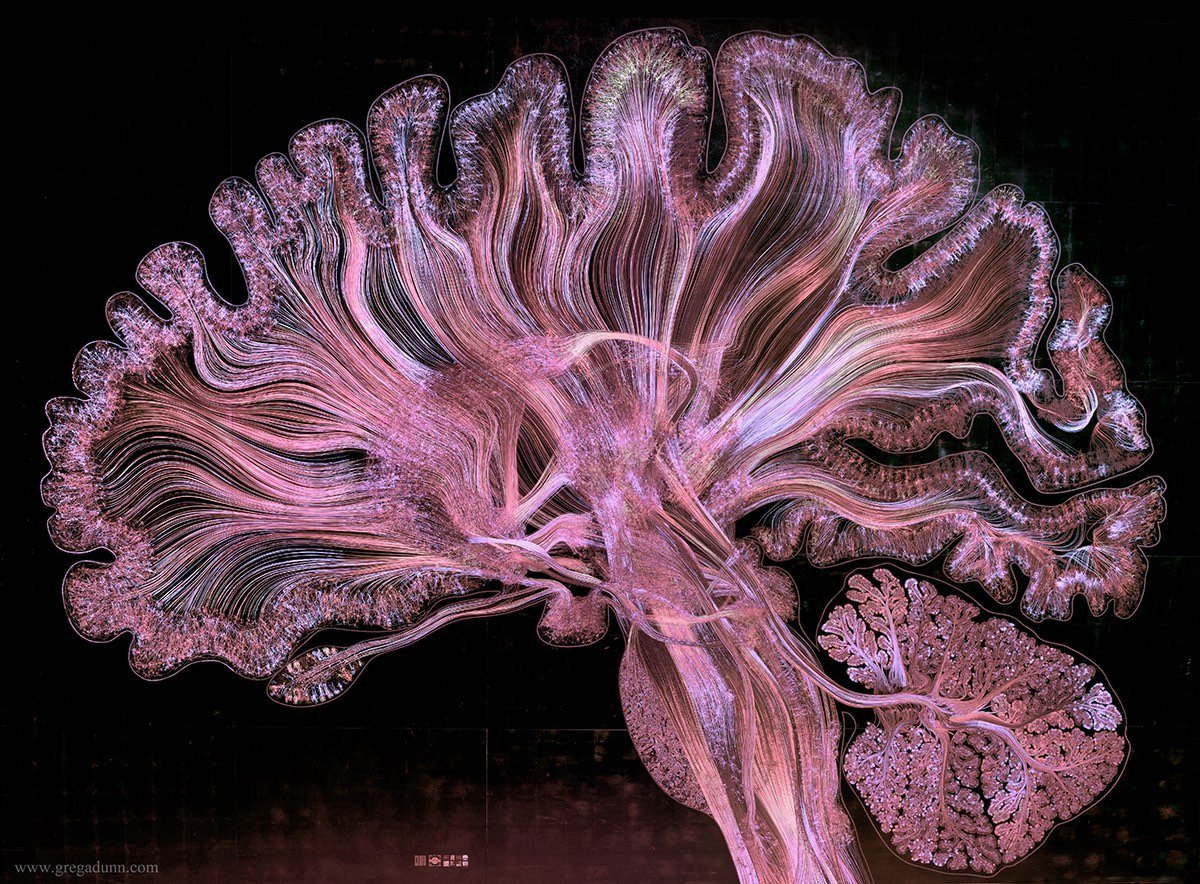

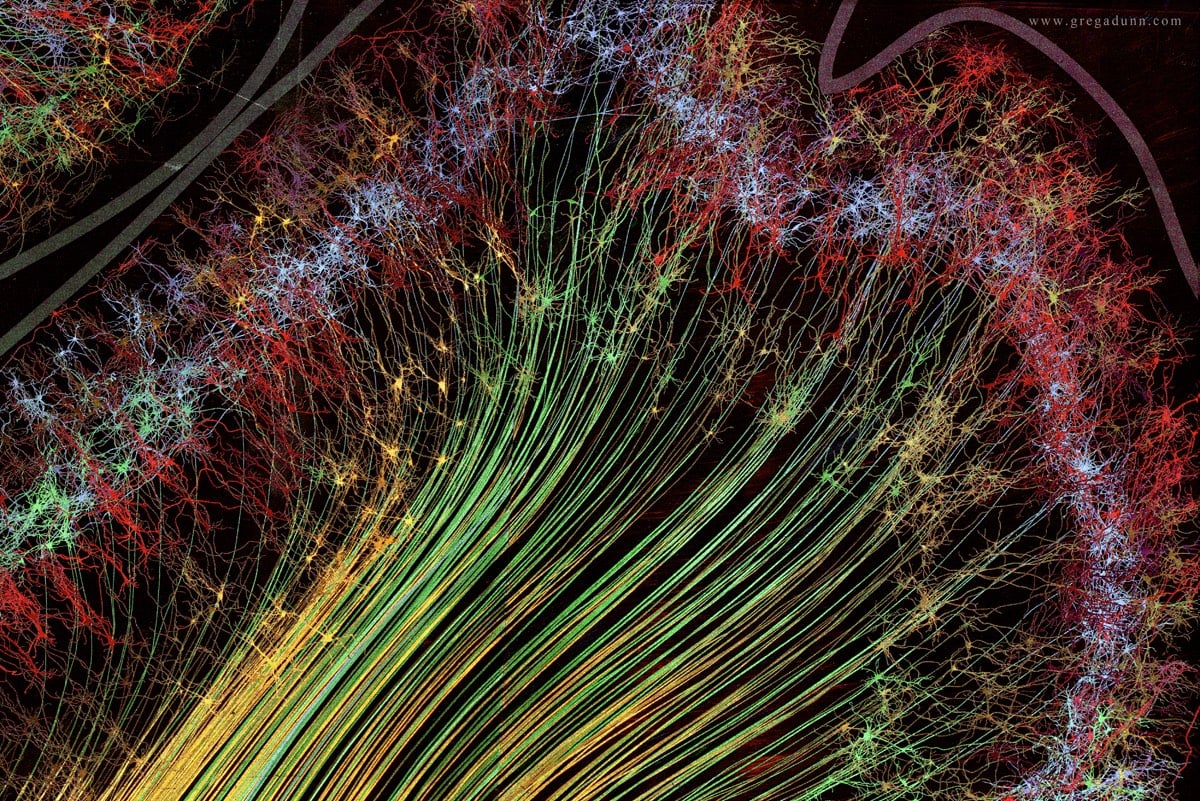

Self Reflected is a project by a pair of artist/scientists that aims to visualize the inner workings of the human brain.

Dr. Greg Dunn (artist and neuroscientist) and Dr. Brian Edwards (artist and applied physicist) created Self Reflected to elucidate the nature of human consciousness, bridging the connection between the mysterious three pound macroscopic brain and the microscopic behavior of neurons. Self Reflected offers an unprecedented insight of the brain into itself, revealing through a technique called reflective microetching the enormous scope of beautiful and delicately balanced neural choreographies designed to reflect what is occurring in our own minds as we observe this work of art. Self Reflected was created to remind us that the most marvelous machine in the known universe is at the core of our being and is the root of our shared humanity.

It’s important to emphasize that these images are not brain scans…they are artistic representations of neural pathways and other structures in the brain.

Self Reflected was designed to be a highly accurate representation of a slice of the brain and is informed by deep neuroscience research to allow it to function as a reliable educational tool as well as a work of art.

Andrea Kuszewski shares some research about human intelligence and offers five techniques anyone can use to increase their IQ.

There are absolutely oodles of terrible things written and promoted on how to “train your brain” to “get smarter”. When I speak of “brain training games”, I’m referring to the memorization and fluency-type games, intended to increase your speed of processing, etc, such as Sudoku, that they tell you to do in your “idle time” (complete oxymoron, regarding increasing cognition). I’m going to shatter some of that stuff you’ve previously heard about brain training games. Here goes: They don’t work. Individual brain training games don’t make you smarter-they make you more proficient at the brain training games.

Now, they do serve a purpose, but it is short-lived. The key to getting something out of those types of cognitive activities sort of relates to the first principle of seeking novelty. Once you master one of those cognitive activities in the brain-training game, you need to move on to the next challenging activity. Figure out how to play Sudoku? Great! Now move along to the next type of challenging game. There is research that supports this logic.

A few years ago, scientist Richard Haier wanted to see if you could increase your cognitive ability by intensely training on novel mental activities for a period of several weeks. They used the video game Tetris as the novel activity, and used people who had never played the game before as subjects (I know-can you believe they exist?!). What they found, was that after training for several weeks on the game Tetris, the subjects experienced an increase in cortical thickness, as well as an increase in cortical activity, as evidenced by the increase in how much glucose was used in that area of the brain. Basically, the brain used more energy during those training times, and bulked up in thickness-which means more neural connections, or new learned expertise-after this intense training. And they became experts at Tetris. Cool, right?

Here’s the thing: After that initial explosion of cognitive growth, they noticed a decline in both cortical thickness, as well as the amount of glucose used during that task. However, they remained just as good at Tetris; their skill did not decrease. The brain scans showed less brain activity during the game-playing, instead of more, as in the previous days. Why the drop? Their brains got more efficient. Once their brain figured out how to play Tetris, and got really good at it, it got lazy. It didn’t need to work as hard in order to play the game well, so the cognitive energy and the glucose went somewhere else instead.

I played football in high school, specifically offensive line, defensive line, and linebacker. So did my older and younger brothers, and my older brother coaches linemen and defense at a high school in Michigan. I started out first in middle school and high school as a defensive specialist, which makes sense given John Madden’s theory of linemen.

Madden used to say that offensive linemen were overwhelmingly big kids who grew up to be big men, who’d always been told not to pick on but to protect kids smaller than them. Defensive linemen, on the other hand, were little kids who grew up fighting with other little kids (and often bigger kids) but who grew up to be big men. That’s what I was: a skinny kid who became a fat adolescent who became a big, strong teenager. (Now I’m a strong, fat writer, so that’s how that turned out.)

Madden said the problem is that offensive linemen still need to be as tough and aggressive as defensive linemen, but they always hold something back. Some of this is part of the rules of football: offensive linemen literally can’t do everything a defensive lineman can do to them. So what Madden would do is take a tackling dummy and let his offensive linemen beat the hell out of it. Punch it, tear it, throw it across the room, it doesn’t matter. Help them get to a point where they’re no longer worried about being over-aggressive.

College football reporter Spencer Hall writes:

You should know this about offensive line coaches: they are large, demanding men with Falstaffian appetites, jutting jaws, and no governors on their speech engines. They eat titanic portions. They cram their lips full of dip in film study like they are loading a mortar. They drink bottled water like parched camels, and in their leisure time would consider a suitcase of beer to be a personal carry-on item for them, and them alone. They are terrifyingly disciplined in the moment, and nap like large breed dogs when allowed.

Now, even if Madden’s amateur psychobiography of linemen were true when he was coaching, it’s not true any more. In the 1990s, coaches got really good at taking tall but relatively slender athletes from every position, bulking them up, and sticking them at offensive line.

In high school, we played this guy named Jon Jansen, who ended up becoming a star offensive tackle for the Washington Redskins, then coming home to Detroit and playing one year for the Lions before becoming an announcer. In high school, he weighed almost 100 pounds less than he did as a pro. He was listed then at 6’8”, 230 lbs, and played tight end and middle linebacker. He was FAST. They moved him all over the field, catching touchdowns and uprooting people. It was chaos.

He went to Michigan, they redshirted him for his freshman year, and he came back weighing 300 lbs and playing offensive line. Jansen told Bob Costas that he thought between 15 and 20 percent of NFL players were using illegal performance enhancing drugs, noting that the NFL didn’t then test for human growth hormone. I remember when I was still in high school reading a long profile of the University of Nebraska’s offensive linemen that attributed their huge gains in mass and strength to weightlifting and creatine. Draw your own conclusions about what was happening in pro and college football at the time.

This is all to say that what offensive linemen do in football is not well understood. When the NFL finally started to act on widespread concussions and the resultant uptick in chronic traumatic encephalopathy — if you never have, please read about the life and death of Dave Duerson — they focused on open-field helmet-to-helmet hits and defensive players targeting quarterbacks, running backs, and receivers (so-called “skill positions”). They ignored the constant battering that offensive linemen take, how repeated brain injury poses the greatest risk for long-term problems, how linemen are rewarded for staying on the field and playing through pain, and the ways in which they’re encouraged to both be more aggressive and prioritize someone else’s safety over their own.

Kurt Vonnegut said that his chief objection to life in general was that it was “too easy, when alive, to make horrible mistakes.” This is what offensive line coaches live with: the notion that for every five simple circles drawn on a board, there are a nearly infinite number of possible threats looming out in the theoretical white space. Offensive plays give skill players arrows. Those arrows point down the field toward an endzone, a stopping point, a celebration. Those five simple circles stay on the board in the same place, and are on duty forever.

They are rough men in the business of protection.

Today, Hall has one of the most beautiful, thoughtful, human pieces on offensive linemen I’ve ever read, which I’ve been quoting here throughout. It’s called “The Business Of Protection,” and subtitled “It Is Never, Ever About You.” It’s a story about Vanderbilt University’s offensive line coach Herb Hand, who suffered a sudden and life-threatening brain hemorrhage waiting in line at a hotel breakfast bar on a recruiting trip. But Hand’s story manages to become equally about football, fatherhood, the brain, the heart, how we defend ourselves from what’s horrible in the things we love, and how we defend the people closest to us from ourselves.

When Hand had to have the impossible conversation — the one where you, with cellphone, stuck in a hospital far away from home, might have to say the last words you ever say to your children — he did what he was trained to do. He told them that he loved them, and that everything would be okay. The second part of that might not have been true at the time. The emergency room doctor certainly didn’t think so, and neither did Hand. But standing between harm and others is what linemen do, even if there’s little hope to be had in the face of numbers, size, and speed. There is a dot on the board, and a shield held against whatever slings and arrows lurk in the ether. It stands against harm until it cannot any longer.

Update: While I was writing this post, the NFL and 4,500 former players (about one-third of the 12,000 still living) reached a mediation agreement to settle a number of lawsuits over concussions for $765 million.

This figure includes legal fees, medical exams, the cost of noticing former players, and $10 million for research and education on the long-term effect of brain injuries, leaving $675 million to compensate former players who’ve suffered cognitive injuries (or, if dead, their families). The settlement applies only to players who’ve retired by the time court approves its terms. Current players will need a separate agreement to be compensated for existing and future injuries, and the NFL admits no liability.

As Buzzfeed sportswriter Erik Malinowski notes on Twitter: “Holy crap, what a bargain… ESPN pays $1.9 billion *every year* for Monday Night Football. 4,500 ex-players will get 40% of that (once) for decades of head trauma.”

Or rather, protozoan? Toxoplasma gondii is a protozoan parasite which is transmitted from rodents to cats through a crafty mechanism…it makes mice attracted to the smell of cat urine. Mouse goes near cat, cat eats mouse, T. gondii has a new host. From cats, the parasite can jump into humans, where it may be responsible for all sorts of nastiness:

Well, the behavioral influence plays out in a number of strange ways. Toxoplasma infection in humans has been associated with everything from slowed reaction times to a fondness toward cat urine — to more extreme behaviors such as depression and even schizophrenia. And here’s the kicker: Two different research groups have independently shown that Toxo-infected individuals are three to four times as likely of being killed in car accidents due to reckless driving.

And maybe makes us want to invent networking technology and share cool links? In this five-minute talk, Kevin Slavin cleverly connects viral media with T. gondii:

That video was so good, I watched the whole thing twice.

For The Verge, Russell Brandom writes about the increasing use of neural implants to control the symptoms of a variety of diseases, from depression to Parkinson’s to dystonia.

The results are as reliable as flipping a light switch, but even after decades of testing, no one knows exactly why it works. Dr. Kaplitt, the surgeon who installed Rebecca Serdans’ implant, explains it by likening the brain to a collection of electrical circuits. A disorder like dystonia is a failure of those circuits. When you install a brain stimulation device, “it’s presumably blocking abnormal information from getting from one part of the brain to another, or normalizing that information.” But Kaplitt is the first to acknowledge that this is just a theory. “The mechanism by which brain stimulation works is still somewhat unclear and controversial.”

But the lingering questions haven’t slowed down research. There are already patents that would use brain stimulation implants to enhance memory or prevent stuttering, to cure anorexia or bring a person to orgasm. Experimental studies use the device to treat Alzheimer’s disease and drug addiction. Those circuits aren’t as well understood as the circuits governing movement disorders, but the principle is no different. Once you’ve got a line into the circuitry of the brain, Parkinson’s is just the beginning.

Last week I featured a video of a man with Parkinson’s who has a brain pacemaker that allows him to function normally.

There is increasing evidence that a parasite called Toxoplasma gondii, which many humans have gotten from cat feces, can rewire our brains and modify human behavior in unexpected ways.

The parasite, which is excreted by cats in their feces, is called Toxoplasma gondii (T. gondii or Toxo for short) and is the microbe that causes toxoplasmosis-the reason pregnant women are told to avoid cats’ litter boxes. Since the 1920s, doctors have recognized that a woman who becomes infected during pregnancy can transmit the disease to the fetus, in some cases resulting in severe brain damage or death. T. gondii is also a major threat to people with weakened immunity: in the early days of the AIDS epidemic, before good antiretroviral drugs were developed, it was to blame for the dementia that afflicted many patients at the disease’s end stage. Healthy children and adults, however, usually experience nothing worse than brief flu-like symptoms before quickly fighting off the protozoan, which thereafter lies dormant inside brain cells-or at least that’s the standard medical wisdom.

But if Flegr is right, the “latent” parasite may be quietly tweaking the connections between our neurons, changing our response to frightening situations, our trust in others, how outgoing we are, and even our preference for certain scents. And that’s not all. He also believes that the organism contributes to car crashes, suicides, and mental disorders such as schizophrenia. When you add up all the different ways it can harm us, says Flegr, “Toxoplasma might even kill as many people as malaria, or at least a million people a year.”

In a 2008 paper called The Seductive Allure of Neuroscience Explanations, a group from Yale University demonstrated that including neuroscientific information in explanations of psychological phenomena makes the explanations more appealing, even if the neuroscientific info is irrelevant.

Explanations of psychological phenomena seem to generate more public interest when they contain neuroscientific information. Even irrelevant neuroscience information in an explanation of a psychological phenomenon may interfere with people’s abilities to critically consider the underlying logic of this explanation.

I don’t know if I buy this. Perhaps if the authors had explained their results relative to how the human brain functions…

This is incredible…researchers at Berkeley have developed a system that reads people’s minds while they watch a video and then roughly reconstructs what they were watching from thousands of hours of YouTube videos. This short demo shows how it works:

Nishimoto and two other research team members served as subjects for the experiment, because the procedure requires volunteers to remain still inside the MRI scanner for hours at a time.

They watched two separate sets of Hollywood movie trailers, while fMRI was used to measure blood flow through the visual cortex, the part of the brain that processes visual information. On the computer, the brain was divided into small, three-dimensional cubes known as volumetric pixels, or “voxels.”

“We built a model for each voxel that describes how shape and motion information in the movie is mapped into brain activity,” Nishimoto said.

The brain activity recorded while subjects viewed the first set of clips was fed into a computer program that learned, second by second, to associate visual patterns in the movie with the corresponding brain activity.

Brain activity evoked by the second set of clips was used to test the movie reconstruction algorithm. This was done by feeding 18 million seconds of random YouTube videos into the computer program so that it could predict the brain activity that each film clip would most likely evoke in each subject.

Finally, the 100 clips that the computer program decided were most similar to the clip that the subject had probably seen were merged to produce a blurry yet continuous reconstruction of the original movie.
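To make the last two steps a little more concrete, here is a toy sketch of the "rank the library, then average the best matches" idea. It is not the researchers' code: the linear per-voxel model, the squared-error mismatch score, the function names, and all of the numbers are assumptions made up for illustration, and the model weights are simply given rather than learned from a first set of clips. Each "clip" is just a short list of features standing in for video frames, and `k=3` stands in for the 100 clips mentioned above.

```python
def predict_activity(weights, features):
    """Predict one value per voxel as a weighted sum of the clip's features."""
    return [sum(w * f for w, f in zip(voxel_weights, features))
            for voxel_weights in weights]


def mismatch(predicted, observed):
    """Sum of squared differences; smaller means a closer match."""
    return sum((p - o) ** 2 for p, o in zip(predicted, observed))


def reconstruct(observed, library, weights, k):
    """Average the k library clips whose predicted activity best matches `observed`."""
    ranked = sorted(
        library,
        key=lambda clip: mismatch(predict_activity(weights, clip), observed),
    )
    best = ranked[:k]
    n_features = len(best[0])
    return [sum(clip[i] for clip in best) / len(best) for i in range(n_features)]


# Hypothetical toy data: 2 features per clip, 3 voxels.
weights = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]   # assumed, already-fitted model
library = [[0.0, 0.0], [1.0, 0.0], [0.9, 0.1], [0.0, 1.0], [1.1, -0.1]]

seen_clip = [1.0, 0.0]                            # never shown to reconstruct()
observed = predict_activity(weights, seen_clip)   # stand-in for a measured scan

reconstruction = reconstruct(observed, library, weights, k=3)
print(reconstruction)
```

Averaging several near-misses instead of picking a single winner is presumably why the real reconstructions come out blurry but continuous: no single library clip matches exactly, so the result is a blend of the closest ones.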

The kicker: “the breakthrough paves the way for reproducing the movies inside our heads that no one else sees, such as dreams and memories”. First time-travelling neutrinos and now this…what a time to be alive. (via ★essl)

But only if it’s friendly chat…competitive conversations don’t result in the same improvement. (I don’t think Words With Friends counts either…)

They found that engaging in brief (10 minute) conversations in which participants were simply instructed to get to know another person resulted in boosts to their subsequent performance on an array of common cognitive tasks. But when participants engaged in conversations that had a competitive edge, their performance on cognitive tasks showed no improvement.

“We believe that performance boosts come about because some social interactions induce people to try to read others’ minds and take their perspectives on things,” Ybarra said. “And we also find that when we structure even competitive interactions to have an element of taking the other person’s perspective, or trying to put yourself in the other person’s shoes, there is a boost in executive functioning as a result.”

I’ve noticed this effect in myself, but I always thought it was the result of my introversion, i.e. competitive conversations are more stressful and sap energy and mental function more quickly than normal conversations. I know a couple of people who enjoy competitive conversation and I’ve largely steered clear of those interactions since realizing I always felt so blah afterwards.

Tatiana and Krista Hogan are conjoined twins who not only share a bit of each other’s skulls but also parts of their brains. So are they two people with two brains & personalities or one person with one brain and two (split) personalities?

Adding to the conundrum, of course, are their linked brains, and the mysterious hints of what passes between them. The family regularly sees evidence of it. The way their heads are joined, they have markedly different fields of view. One child will look at a toy or a cup. The other can reach across and grab it, even though her own eyes couldn’t possibly see its location. “They share thoughts, too,” says Louise. “Nobody will be saying anything,” adds Simms, “and Tati will just pipe up and say, ‘Stop that!’ And she’ll smack her sister.” While their verbal development is delayed, it continues to get better. Their sentences are two or three words at most so far, and their enunciation is at first difficult to understand. Both the family, and researchers, anxiously await the children’s explanation for what they are experiencing.

Lovely long piece in the November issue of Esquire about the brain of Henry Molaison, who you may have previously heard of as Patient H.M., aka the man who lacked the ability to remember anything for more than a couple of minutes. His brain has now been cut into thin slices in an effort to construct a map of the human brain accurate down to the level of individual neurons.

Corkin first met Henry at Brenda Milner’s lab in Montreal in 1962, and over the years, as the mining of his mind has continued, she’s witnessed firsthand how Henry continues to give up riches, broadening our understanding of how memory works. But she’s also keenly aware of Henry’s enduring mysteries, has documented things about him that nobody can quite explain, not yet.

For example, Henry’s inability to recall postoperative episodes, an amnesia that was once thought to be complete, has revealed itself over the years to have some puzzling exceptions. Certain things have managed, somehow, to make their way through, to stick and become memories. Henry knows a president was assassinated in Dallas, though Kennedy’s motorcade didn’t leave Love Field until more than a decade after Henry left my grandfather’s operating room. Henry can hear the incomplete name of an icon — “Bob Dy …” — and complete it, even though in 1953 Robert Zimmerman was just a twelve-year-old chafing against the dead-end monotony of small-town Minnesota. Henry can tell you that Archie Bunker’s son-in-law is named Meathead.

How is this possible?

The piece is written by the grandson of the doctor who removed a portion of Molaison’s brain in an effort to cure his epilepsy.

Jonah Lehrer on what our brains are up to when we’re shopping at Costco.

As I note in How We Decide, this data directly contradicts the rational models of microeconomics. Consumers aren’t always driven by careful considerations of price and expected utility. We don’t look at the electric grill or box of chocolates and perform an explicit cost-benefit analysis. Instead, we outsource much of this calculation to our emotional brain, and rely on relative amounts of pleasure versus pain to tell us what to purchase.

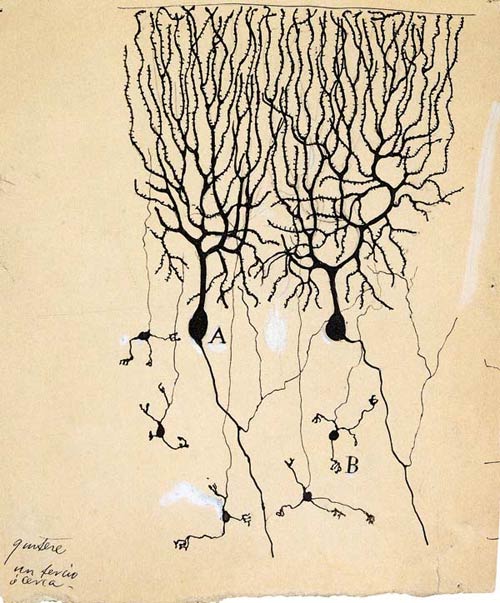

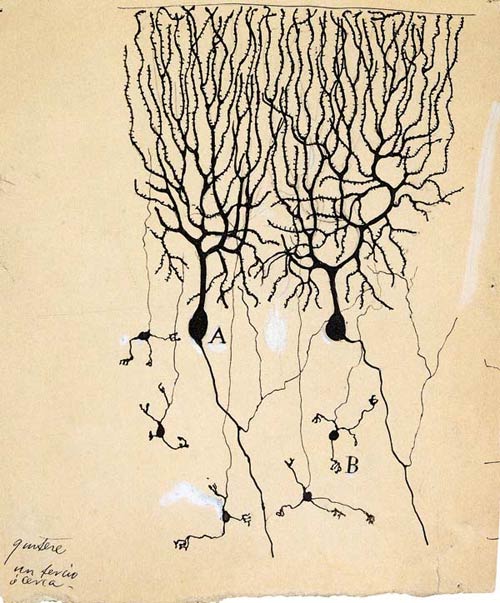

100 years of visualizing the brain, from the discovery of neurons in the 19th century to MRI investigations in the 1990s.

Participants in a sensory deprivation experiment reported having hallucinations after just fifteen minutes.

They then put the participants, one by one, in a dark anechoic chamber which shields all incoming sounds and deadens any noise made by the participant. The room had a ‘panic button’ to stop the experiment but apparently no-one needed to use it.

(via wired)

Our brains have Oprah neurons, Aniston neurons, Eiffel Tower neurons, and Saddam neurons that fire when we see pictures or hear the names of these people and places.

Yet “Oprah neuron” might be a misnomer. The same neuron also fired, albeit much more weakly, to Whoopi Goldberg in one patient. Similarly, Luke Skywalker neurons also responded to Yoda, and those famous Jennifer Aniston neurons flashed to her former Friends co-star Lisa Kudrow. Such connections could explain how our brain relates two abstract concepts, Quian Quiroga says.

And then the Skywalker neurons said, “these aren’t the memories you’re looking for”. Ba doomp.

Humans spend a large amount of time not paying attention to what they are supposed to be doing. This might not be such a bad thing.

The fact that both of these important brain networks become active together suggests that mind wandering is not useless mental static. Instead, Schooler proposes, mind wandering allows us to work through some important thinking. Our brains process information to reach goals, but some of those goals are immediate while others are distant. Somehow we have evolved a way to switch between handling the here and now and contemplating long-term objectives. It may be no coincidence that most of the thoughts that people have during mind wandering have to do with the future.

This jibes well with the picture of the absentmindedness typical of some brilliant people.

If I ever write a book, it might have something to do with the two minds that govern creative expertise: the instinctual unconscious mind (the realm of relaxed concentration) and the thinking mind (the realm of deliberate practice). The tension between these two minds is both the key to and fatal flaw of human creativity. From the world of sports[1], here’s Rockies pitcher and college physics major Jeff Francis describing the interplay of the minds on the mound:

Even though I do understand the forces and everything, there’s a separation when I’m pitching. If I throw a good pitch, I know what I did to do it, but there has to be a separation between knowing what I did and knowing why what I did helped the ball do what it did, if that makes any sense at all. If I thought about it on the mound, I’d be really mechanical and trying to be too perfect instead of doing what comes naturally.

But you don’t need to be a physics major to wrestle with the consequences of the conflict between the two minds. After an injury and subsequent surgery, Francis’ instinctual mind works to protect his body from further injury:

Francis repeatedly pulled the ball back in preparation to throw. But as he flashed his arm forward, his hand would, mind unaware, bring the ball back toward his ear rather than at full extension. It was his body essentially shortening the axis of his arm to decrease the force on his shoulder, protecting him from pain. And Francis could not stop it.

After his 10th pitch and first muffled groan of pain, he stopped.

“It’s hurting you?” Murayama said.

“Yeah,” Francis said.

“I can tell. You’re getting out ahead of your arm. Slow down, stay back a little more.”

“Does it look like I’m scared to throw a little?”

“Are you scared?”

“Not consciously.”

To fully recover and regain his former effective pitching motion, Francis will utilize his thinking mind to retrain his unconscious mind through deliberate practice to ignore the injury potential. (thx, adriana)

[1] Most of the examples I’ve cited over the years deal with sports, mostly because professional athletes are among the most trained, scrutinized, studied, and optimized creative workers in the world. For a lot of other professions and endeavors, the data and scrutiny just aren’t as evident.

Since I don’t use Adderall or Provigil, it took me a few days to get through this New Yorker article about neuroenhancing drugs. The main takeaway? Like cosmetic body modification in the 80s, mind modification through prescription chemical means is already commonplace for some and will soon be for many.

Chatterjee worries about cosmetic neurology, but he thinks that it will eventually become as acceptable as cosmetic surgery has; in fact, with neuroenhancement it’s harder to argue that it’s frivolous. As he notes in a 2007 paper, “Many sectors of society have winner-take-all conditions in which small advantages produce disproportionate rewards.” At school and at work, the usefulness of being “smarter,” needing less sleep, and learning more quickly are all “abundantly clear.” In the near future, he predicts, some neurologists will refashion themselves as “quality-of-life consultants,” whose role will be “to provide information while abrogating final responsibility for these decisions to patients.” The demand is certainly there: from an aging population that won’t put up with memory loss; from overwrought parents bent on giving their children every possible edge; from anxious employees in an efficiency-obsessed, BlackBerry-equipped office culture, where work never really ends.

The article is full of wonderful vocabulary. Like the “worried well”: those people who are healthy but go to the doctor anyway to see if they can be made more healthy somehow. Being concerned about how good you’ve got it and attempting to do something about it seems to be another one of those uniquely American phenomena caused by an overabundance of free time & disposable income and the desire to overachieve. See also the impoverished wealthy, the dumb educated, and the fat fit.

Henry Molaison, more widely known as H.M., died last week at 82. Molaison was an amnesiac and the study of his condition revealed much about the workings of the human brain. He lost the ability to form new long-term memories after a surgery in 1953 and couldn’t remember anything after that for more than 20 seconds or so.

Living at his parents’ house, and later with a relative through the 1970s, Mr. Molaison helped with the shopping, mowed the lawn, raked leaves and relaxed in front of the television. He could navigate through a day attending to mundane details — fixing a lunch, making his bed — by drawing on what he could remember from his first 27 years.

Molly Birnbaum was training to be a chef in Boston when she got hit by a car and lost her sense of smell. Soon after, she moved to New York.

Without the aroma of car exhaust, hot dogs or coffee, the city was a blank slate. Nothing was unbearable and nothing was especially beguiling. Penn Station’s public restroom smelled the same as Jacques Torres’s chocolate shop on Hudson Street. I knew that New York possessed a further level of meaning, but I had no access to it, and I worked hard to ignore what I could not detect.

Update: Here’s another take on anosmia and Birnbaum’s article.

In the first year of my recovery, I regularly visited both a neurologist and neuropsychologist who both disputed this claim. They told me that smell and taste, although related, are essentially exclusive. If anything, my neuropsychologist told me, smell is more integrated with memory.

In my experience, I’ve found this to be true: I have not lost my love of food; in fact, I feel like my appreciation for flavor combinations have been heightened. Milk does not taste like a “viscous liquid” to me and ice cream is certainly more than just “freezing.” Similarly, a good wine is more than tasting the acids, a memorable dessert is more than simply sweet, and french fries do not taste like salty nothing-sticks.

Due to a hand tremor, musician Eddie Adcock was having trouble playing the banjo. During the surgery to fix the problem, the doctors had Adcock play his banjo to isolate the problem in his brain and then they made the repair. Video here. (via delicious ghost)

Jonah Lehrer on daydreaming and the human brain’s default network. Creativity, especially with regard to children, might be stifled by too little daydreaming and too much television.

After monitoring the daily schedule of the children for several months, Belton came to the conclusion that their lack of imagination was, at least in part, caused by the absence of “empty time,” or periods without any activity or sensory stimulation. She noticed that as soon as these children got even a little bit bored, they simply turned on the television: the moving images kept their minds occupied. “It was a very automatic reaction,” she says. “Television was what they did when they didn’t know what else to do.”

The problem with this habit, Belton says, is that it kept the kids from daydreaming. Because the children were rarely bored — at least, when a television was nearby — they never learned how to use their own imagination as a form of entertainment. “The capacity to daydream enables a person to fill empty time with an enjoyable activity that can be carried on anywhere,” Belton says. “But that’s a skill that requires real practice. Too many kids never get the practice.”

But television isn’t the default network that Lehrer is referring to:

Every time we slip effortlessly into a daydream, a distinct pattern of brain areas is activated, which is known as the default network. Studies show that this network is most engaged when people are performing tasks that require little conscious attention, such as routine driving on the highway or reading a tedious text. Although such mental trances are often seen as a sign of lethargy — we are staring haplessly into space — the cortex is actually very active during this default state, as numerous brain regions interact. Instead of responding to the outside world, the brain starts to contemplate its internal landscape. This is when new and creative connections are made between seemingly unrelated ideas.

Basketball players are more skilled than even keen observers of the game (sportswriters and coaches) when predicting whether a shot will go in the basket or not.

Not surprisingly, the players were significantly better at predicting whether or not the shot would go in. While they got it right more than two-thirds of the time, the non-playing experts (i.e., the coaches and writers) only got it right 44 percent of the time.

It’s thought that the brains of the players act as though they are actually taking the shot.

In other words, when professional basketball players watch another player take a shot, mirror neurons in their pre-motor areas might light up as if they were taking the same shot. This automatic empathy allows them to predict where the ball will end up before the ball is even in the air.

Jonah Lehrer, author of Proust Was a Neuroscientist, has a piece in the New Yorker this week (not online[1]) about how the process of insight works in the brain. The main takeaway is that insight comes easiest when our brains are relaxed and not focused on too much detail, so that they are able to look for more general associations between seemingly disparate ideas.

Kounios tells a story about an expert Zen meditator who took part in one of the C.R.A. insight experiments. At first, the meditator couldn’t solve any of the insight problems. “This Zen guy went through thirty or so of the verbal puzzles and just drew a blank,” Kounios said. “He was used to being very focussed, but you can’t solve these problems if you’re too focussed.” Then, just as he was about to give up, he started solving one puzzle after another, until, by the end of the experiment, he was getting them all right. It was an unprecedented streak. “Normally, people don’t get better as the task goes along,” Kounios said. “If anything, they get a little bored.” Kounios believes that the dramatic improvement of the Zen meditator came from his paradoxical ability to focus on not being focussed, so that he could pay attention to those remote associations in the right hemisphere. “He had the cognitive control to let go,” Kounios said. “He became an insight machine.”

[1] There’s a samizdat PDF of the article here.

I try not to miss any of Atul Gawande’s New Yorker articles, but his piece on itching from this week’s issue is possibly the most interesting thing I’ve read in the magazine in a long time. He begins by focusing on a specific patient for whom compulsive itching has become a very serious problem. (Warning, this quote is pretty disturbing…but don’t let it deter you from reading the article.)

…the itching was so torturous, and the area so numb, that her scratching began to go through the skin. At a later office visit, her doctor found a silver-dollar-size patch of scalp where skin had been replaced by scab. M. tried bandaging her head, wearing caps to bed. But her fingernails would always find a way to her flesh, especially while she slept.

One morning, after she was awakened by her bedside alarm, she sat up and, she recalled, “this fluid came down my face, this greenish liquid.” She pressed a square of gauze to her head and went to see her doctor again. M. showed the doctor the fluid on the dressing. The doctor looked closely at the wound. She shined a light on it and in M.’s eyes. Then she walked out of the room and called an ambulance. Only in the Emergency Department at Massachusetts General Hospital, after the doctors started swarming, and one told her she needed surgery now, did M. learn what had happened. She had scratched through her skull during the night — and all the way into her brain.

From there, Gawande pulls out to tell us about itching/scratching (the two are inseparable), then about a recent theory of how our brains perceive the world (“visual perception is more than ninety per cent memory and less than ten per cent sensory nerve signals”), and finally about a fascinating therapy initially developed for those who experience phantom limb pain called mirror treatment.

Among them is an experiment that Ramachandran performed with volunteers who had phantom pain in an amputated arm. They put their surviving arm through a hole in the side of a box with a mirror inside, so that, peering through the open top, they would see their arm and its mirror image, as if they had two arms. Ramachandran then asked them to move both their intact arm and, in their mind, their phantom arm, to pretend that they were conducting an orchestra, say. The patients had the sense that they had two arms again. Even though they knew it was an illusion, it provided immediate relief. People who for years had been unable to unclench their phantom fist suddenly felt their hand open; phantom arms in painfully contorted positions could relax. With daily use of the mirror box over weeks, patients sensed their phantom limbs actually shrink into their stumps and, in several instances, completely vanish. Researchers at Walter Reed Army Medical Center recently published the results of a randomized trial of mirror therapy for soldiers with phantom-limb pain, showing dramatic success.

Crazy! Gawande documents and speculates about other applications of this treatment, including using virtual reality representations instead of mirrors and utilizing multiple mirrors for treatment of M.’s itchy scalp. Anyway, read the whole thing…highly recommended.

Rampant speculation from Jonah Lehrer on why people care so much when they watch overpaid athletes play sports. It is, perhaps, all about mirror neurons:

“The main functional characteristic of mirror neurons is that they become active both when the monkey makes a particular action (for example, when grasping an object or holding it) and when it observes another individual making a similar action.” In other words, these peculiar cells mirror, on our inside, the outside world; they enable us to internalize the actions of another. They collapse the distinction between seeing and doing.

This suggests that when I watch Kobe glide to the basket for a dunk, a few deluded cells in my premotor cortex are convinced that I, myself, am touching the rim. And when he hits a three pointer, my mirror neurons light up as if I’ve just made the crucial shot. They are what bind me to the game, breaking down that 4th wall separating fan from player. I’m not upset because my team lost: I’m upset because it literally feels like I lost, as if I had been on the court.

One of the interesting findings of Elizabeth Spelke’s Harvard baby brain research lab is that while babies prefer looking at pictures of people of their own race over other races, they are much more biased about language.

‘They like toys more that are associated with someone who has spoken their language. They prefer to eat foods offered to them by a native speaker compared to a speaker of a foreign language. And older children say that they want to be friends with someone who speaks in their native accent.’ Accents and vernacular, far more than race, seem to influence the people we like. ‘Children would rather be friends with someone who is from a different race and speaks with a native accent versus somebody who is their own race but speaks with a foreign accent.’

For scientist Dr. Anne Adams (and composer Maurice Ravel), a rare disease called frontotemporal dementia caused a burst of creativity.

The disease apparently altered circuits in their brains, changing the connections between the front and back parts and resulting in a torrent of creativity. “We used to think dementias hit the brain diffusely,” Dr. Miller said. “Nothing was anatomically specific. That is wrong. We now realize that when specific, dominant circuits are injured or disintegrate, they may release or disinhibit activity in other areas. In other words, if one part of the brain is compromised, another part can remodel and become stronger.”

Some of Adams’ work can be seen here…her portrait of pi contains a touch of synesthesia. (thx, cory)

This talk by neuroanatomist Jill Bolte Taylor was universally considered the best talk at the TED conference last month. In it, she describes the lessons she learned from studying her stroke from inside her own head as it was happening.

And in that moment my right arm went totally paralyzed by my side. And I realized, “Oh my gosh! I’m having a stroke! I’m having a stroke!” And the next thing my brain says to me is, “Wow! This is so cool. This is so cool. How many brain scientists have the opportunity to study their own brain from the inside out?”
