kottke.org posts about literature

Ignore the m-word and read Sally Rooney: How Should a Millennial Be?

As a portrait of young people today, Rooney’s books are remarkably precise—she captures meticulously the way a generation raised on social data thinks and talks. Rooney’s characters love to announce where they fall on the matrix of taste and social awareness. They read Patricia Lockwood and watch Greta Gerwig movies; they read Twitter for jokes. Decisions are made according to typologies. There’s built-in social meaning for any interest or opinion. “No one who likes Yeats is capable of human intimacy,” says Nick, and I was reminded of friends swiping left on Tinder, rejecting dates because their favorite movies signaled unquestionable incompatibility.

The review’s later parenthetical reference to Andrew Martin’s debut novel is noteworthy. I read Rooney’s Conversations with Friends and Martin’s Early Work in close succession last year and see similarities in perspective. I’d also include Benjamin Lytal’s A Map of Tulsa in that grouping. All are told from a fresh standpoint, with varying degrees of lush language, and romantic turmoil only bearable among a certain age group, with a healthy dose of capital-L Literary references. None are stodgy or dated despite some social media observations.

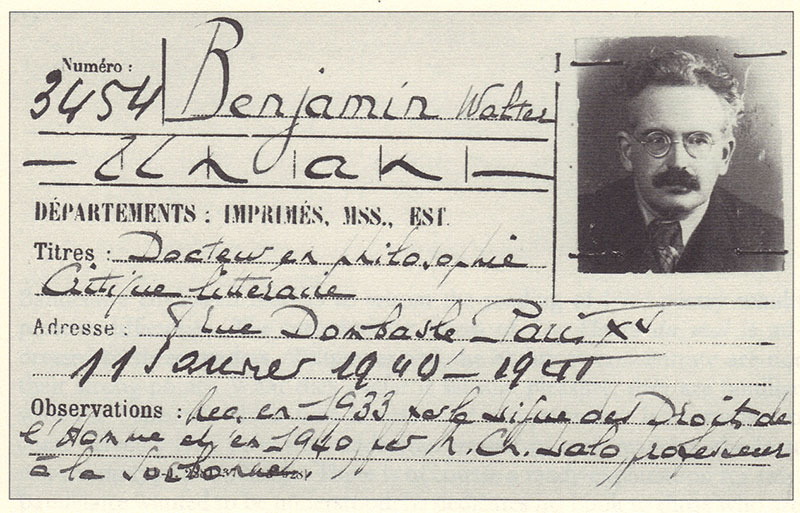

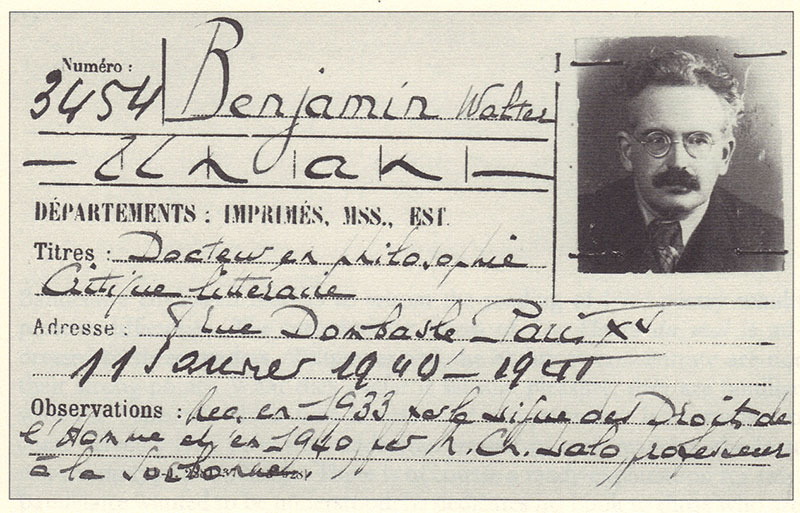

This Aeon essay by Giorgio van Straten, “Lost in Migration,” is excerpted from a book titled In Search of Lost Books, which explains its fascination with a book that’s fascinated many people, a manuscript carried in a briefcase by Walter Benjamin at the end of his life which has never been identified or located and probably did not survive him.

I’ve always been a little turned off by the obsession with this manuscript among Benjamin fans and readers. There’s something so shattering to me about the end of Benjamin’s life, and how he died, that it feels not just trivial, but almost profane to geek out over the imaginary contents of a book he might have left behind. I feel the same way about dead musicians. It’s all just bad news.

Luckily, though, this essay does contain a compelling and concise account of the end of Benjamin’s life.

First Benjamin fled Paris, which had been bombed and was about to be invaded by the German army, for Marseilles:

Benjamin was not an old man - he was only 48 years old - even if the years weighed more heavily at the time than they do now. But he was tired and unwell (his friends called him ‘Old Benj’); he suffered from asthma, had already had one heart attack, and had always been unsuited to much physical activity, accustomed as he was to spending his time either with his books or in erudite conversation. For him, every move, every physical undertaking represented a kind of trauma, yet his vicissitudes had over the years necessitated some 28 changes of address. And in addition he was bad at coping with the mundane aspects of life, the prosaic necessities of everyday living.

Hannah Arendt repeated with reference to Benjamin remarks made by Jacques Rivière about Proust:

He died of the same inexperience that permitted him to write his works. He died of ignorance of the world, because he did not know how to make a fire or open a window.

before adding to them a remark of her own:

With a precision suggesting a sleepwalker his clumsiness invariably guided him to the very centre of a misfortune.

Now this man seemingly inept in the everyday business of living found himself having to move in the midst of war, in a country on the verge of collapse, in hopeless confusion.

From Marseilles he hoped to reach Spain, since, as a German refugee, he did not have the proper exit papers.

The next morning he was joined soon after daybreak by his travelling companions. The path they took climbed ever higher, and at times it was almost impossible to follow amid rocks and gorges. Benjamin began to feel increasingly fatigued, and he adopted a strategy to make the most of his energy: walking for 10 minutes and then resting for one, timing these intervals precisely with his pocket-watch. Ten minutes of walking and one of rest. As the path became progressively steeper, the two women and the boy were obliged to help him, since he could not manage by himself to carry the black suitcase he refused to abandon, insisting that it was more important that the manuscript inside it should reach America than that he should.

A tremendous physical effort was required, and though the group found themselves frequently on the point of giving up, they eventually reached a ridge from which vantage point the sea appeared, illuminated by the sun. Not much further off was the town of Portbou: against all odds they had made it.

Spain had changed its policy on refugees just the day before:

[A]nyone arriving ‘illegally’ would be sent back to France. For Benjamin this meant being handed over to the Germans. The only concession they obtained, on account of their exhaustion and the lateness of the hour, was to spend the night in Portbou: they would be allowed to stay in the Hotel Franca. Benjamin was given room number 3. They would be expelled the next day.

For Benjamin that day never came. He killed himself by swallowing the 15 morphine tablets he had carried with him in case his cardiac problems recurred.

This is how one of the greatest writers and thinkers of the twentieth century was lost to us, forever.

Dr. Time is a nickname some friends gave me within the last couple of years. Its origin is silly, as nicknames’ often are: “Tim” autocorrects to “Time,” so hasty typing in a private Slack turns into a pseudo-persona. I also like that it’s a slant rhyme on Doctor Doom, my favorite supervillain. And in case you haven’t noticed, I have a pretty strong interest in time.

When Jason and I started talking about different ways we could collaborate on the site, the wildest was his suggestion that I write an advice column called “Ask Dr. Time.” I laughed out loud. The proposition was absurd. I don’t want to wade into the disaster that is my life, but the idea that anyone would ask me for personal advice, and that I would be foolish enough to give it, was laughable. Let’s just say I’ve made some poor choices and had some sad circumstances, and leave it at that.

One of those poor choices, however, was spending a lot of time studying philosophy, literature, mathematics, history, and metaphysics. Jason eventually got me to see that “Ask Dr. Time” didn’t have to be an advice column in a conventional sense. What if readers had problems that didn’t require common sense or finely honed interpersonal skills, but an ability to make sense of abstruse reasoning? What if they didn’t need a fancy Watson but an armchair Wittgenstein? What if kottke.org hosted the first metaphysical advice columnist? That proposition is still absurd, but it’s absurd in an interesting way. And “absurd in an interesting way” is what Dr. Time is all about. Not practical solutions, but philosophical entanglements and disentanglings. That I could do.

So on Fridays, from time to time, Dr. Time is going to appear, to answer reader questions that admit of no answer — sometimes here on Kottke.org, and sometimes at the Kottke newsletter I write, Noticing. For this particular entry, the blog seemed more appropriate — and besides, the newsletter was full.

Our first question actually comes from Jason, who, like many of us, is enjoying Emily Wilson’s magnificent contemporary translation of Homer’s The Odyssey.

Jason was struck by this passage in the introduction, on the oral roots and possible oral composition of the Homeric epics:

The state of Homeric scholarship changed radically and permanently in the early 1930s, when a young American classicist named Milman Parry traveled to the then-Yugoslavia with recording equipment and began to study the living oral tradition of illiterate and semiliterate Serbo-Croat bards, who told poetic folk tales about the mythical and semihistorical events of the Serbian past. Parry died at the age of thirty-three from an accidental gunshot, and research was further interrupted by the Second World War. But Parry’s student Albert Lord continued his work on Homer, and published his findings in 1960, under the title The Singer of Tales. Lord and Parry proved definitively that the Homeric poems show the mark of oral composition.

The “Parry-Lord hypothesis” was that oral poetry, from every culture where it exists, has certain distinctive features, and that we can see these features in the Homeric poems—specifically, in the use of formulae, which enable the oral poet to compose at the speed of speech. A writer can pause for as long as she or he wants, to ponder the most fitting adjective for a particular scene; she can also go back and change it afterwards, on further reflection—as in the famous anecdote about Oscar Wilde, who labored all morning to add a comma, and worked all afternoon taking it out. Oral performers do not use commas, and do not have the luxury of time to ponder their choice of words. They need to be able to maintain fluency, and formulaic features make this possible.

Subsequent studies, building on the work of Parry and Lord, have shown that there are marked differences in the ways that oral and literate cultures think about memory, originality, and repetition. In highly literate cultures, there is a tendency to dismiss repetitive or formulaic discourse as cliche; we think of it as boring or lazy writing. In primarily oral cultures, repetition tends to be much more highly valued. Repeated phrases, stories, or tropes can be preserved to some extent over many generations without the use of writing, allowing people in an oral culture to remember their own past. In Greek mythology, Memory (Mnemosyne) is said to be the mother of the Muses, because poetry, music, and storytelling are all imagined as modes by which people remember the times before they were born.

Wilson goes on to consider the implications of the poem’s origins in orality for trying to figure out if there really was an historical Homer, a single author of the great poems — and if so, whether and how we could tell. She also rightly gives some of the Homeric critics a shot in the ribs for their assumptions about oral cultures, which tended not to be drawn from very many historical sources: if Parry had visited with Somali bards rather than singers from the Balkans, he might have come away with very different conclusions.

Orality, even primary orality, before any writing whatsoever, exists in rich and wide varieties. And Homeric orality was probably not so primary as all that: it’s exciting and accessible to us exactly because it’s on that seam between a dominant oral culture and an emerging written one.

Jason’s question is a little bit different. Since I don’t quite remember what he originally asked, I’ll do a very oral-to-literate thing and paraphrase. What do we make of digital media forms like Twitter that are highly interactive and speechlike? Is this a kind of return to orality? Is there a little bit of the Homeric world in our smartphones, where we “chat” with both our mouths and our thumbs?

The answer to this last question is Yes — but in a different way from how it might first appear. We’re a little Homeric because we’re also on the cusp of multiple media regimes, making a great transformation of great civilizations. However, with some exceptions, we’re not especially oral. We’re exceedingly literate. We’re making written language and literacy do things even our grandparents, raised in the age of industrial print, wouldn’t quite recognize.

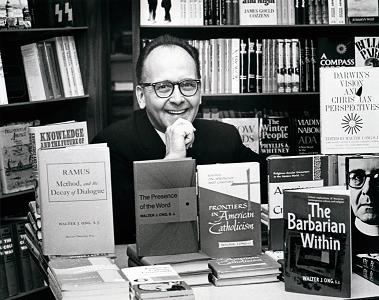

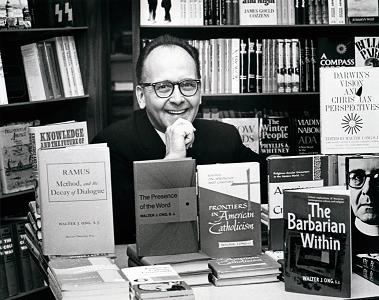

I used the phrase “primary orality” earlier, and it’s one I borrow from Walter Ong. Ong was a Jesuit priest and influential scholar of language and literature. He was very much in this Milman Parry tradition of thinking about the relationship of orality and literacy to forms of thought and shared culture. You can draw a line from Parry to Eric Havelock, who wrote the influential Preface to Plato, and to communications scholars Harold Innis and Marshall McLuhan, and from there to Ong, Hugh Kenner, Northrop Frye, and a number of the more dominant media thinkers of the twentieth century in the English language.

What Ong helped conceptualize and popularize, especially in his book Orality and Literacy, was that in cultures with no tradition of literacy, orality had a fundamentally different character than in those where literacy was dominant. It’s different again in cultures where literacy is known but scarce.

For instance, we tend to associate writing with official culture. We ask for papers, and papers are official. An official record has an official written form, and unofficial forms of writing, or any form of speech, are considered less proper. Literacy and paper are also widespread enough that we expect everyone to have some paper.

A nonliterate culture, for obvious reasons, doesn’t work that way. You need an entirely different system of conventions to differentiate formal from informal, permanent from ephemeral — those concepts might not even hold the same relationships to each other. One of those conventions, so common that it even exists outside the species, is song. And the songs we attribute to Homer are, for us, who exist in their shadow, the best songs ever written.

In the Romantic version of the Parry-Lord thesis, the oral world of Homer is a lost paradise, and our post-literate one, a fallen world of lesser creatures. This probably borrows too much from how Homeric poets feigned to feel about themselves relative to the Mycenaean civilizations that preceded them, and how the classical Greeks appeared to feel about Homer. It’s all representation of lost paradises all the way down.

Ong dodges more of this nostalgia than he’s usually given credit for, but there’s still an element of it, one that he sometimes seems to regret. (Regret for Nostalgia would make a good biography title for Ong.) In his case, it’s conflated with a methodological problem — how do we talk about primary orality (the orality of cultures with no knowledge of writing) in a culture that’s saturated with writing, whose entire intellectual edifice is premised on writing? In fact, oral culture never goes away: it persists in its own logic and suborns the existence of writing to its own ends.

Ong’s great example is classical and medieval rhetoric, which used books, book-based scholarly culture, and book-based modes of training to elevate oral argument to exquisite sophistication. You might also look at hip-hop, which seamlessly blends freestyle vocals, dance, graffiti, and turntable manipulation to create new forms of recording and improvisation. It’s never an either-or, but a constant restructuring.

So, as to the original question: are Twitter and texting new forms of orality? I have a simple answer and a complex one, but they’re both really the same.

The first answer is so lucid and common-sense, you can hardly believe that it’s coming from Dr. Time: if it’s written, it ain’t oral. Orality requires speech, or song, or sound. Writing is visual. If it’s visual and only visual, it’s not oral.

The only form of genuine speech that’s genuinely visual and not auditory is sign language. And sign language is speech-like in pretty much every way imaginable: it’s ephemeral, it’s interactive, there’s no record, the signs are fluid. But even most sign language is at least in part chirographic, i.e., dependent on writing and written symbols. At least, the sign languages we use today: although our spoken/vocal languages are pretty chirographic too.

Writing, especially writing in a hyperliterate society, involves a transformation of the sensorium that privileges vision at the expense of hearing, and privileges reading (especially alphabetic reading) over other forms of visual interpretation and experience. It makes it possible to take in huge troves of information in a limited amount of time. We can read teleprompters and ticker-tape, street signs and medicine bottles, tweets and texts. We can read things without even being aware we’re reading them. We read language on the move all day long: social media is not all that different.

Now, for a more complicated explanation of that same idea, we go back to Father Ong himself. For Ong, there’s a primary orality and a secondary orality. The primary orality, we’ve covered; secondary orality is a little more complicated. It’s not just the oral culture of people who’ve got lots of experience with writing, but of people who’ve developed technologies that allow them to create new forms of oral communication that are enabled by writing.

The great media forms of secondary orality are the movies, television, radio, and the telephone. All of these are oral, but they’re also modern media, which means each medium reshapes orality in its own image: each squeezes your toothpaste through its own tube. But they’re also transformative forms of media in a world that’s dominated by writing and print, because they make it possible to get information in new ways, according to new conventions, and along different sensory channels.

Walter Ong died in 2003, so he never got to see social media at its full flower, but he definitely was able to see where electronic communications were headed. Even in the 1990s, people were beginning to wonder whether interactive chats on computers fell under Ong’s heading of “secondary orality.” He gave an interview where he tried to explain how he saw things — as far as I know, relatively few people have paid attention to it (and the original online source has sadly linkrotted away)1:

“When I first used the term ‘secondary orality,’ I was thinking of the kind of orality you get on radio and television, where oral performance produces effects somewhat like those of ‘primary orality,’ the orality using the unprocessed human voice, particularly in addressing groups, but where the creation of orality is of a new sort. Orality here is produced by technology. Radio and television are ‘secondary’ in the sense that they are technologically powered, demanding the use of writing and other technologies in designing and manufacturing the machines which reproduce voice. They are thus unlike primary orality, which uses no tools or technology at all. Radio and television provide technologized orality. This is what I originally referred to by the term ‘secondary orality.’

I have also heard the term ‘secondary orality’ lately applied by some to other sorts of electronic verbalization which are really not oral at all—to the Internet and similar computerized creations for text. There is a reason for this usage of the term. In nontechnologized oral interchange, as we have noted earlier, there is no perceptible interval between the utterance of the speaker and the hearer’s reception of what is uttered. Oral communication is all immediate, in the present. Writing, chirographic or typed, on the other hand, comes out of the past. Even if you write a memo to yourself, when you refer to it, it’s a memo which you wrote a few minutes ago, or maybe two weeks ago. But on a computer network, the recipient can receive what is communicated with no such interval. Although it is not exactly the same as oral communication, the network message from one person to another or others is very rapid and can in effect be in the present. Computerized communication can thus suggest the immediate experience of direct sound. I believe that is why computerized verbalization has been assimilated to secondary ‘orality,’ even when it comes not in oral-aural format but through the eye, and thus is not directly oral at all. Here textualized verbal exchange registers psychologically as having the temporal immediacy of oral exchange. To handle [page break] such technologizing of the textualized word, I have tried occasionally to introduce the term ‘secondary literacy.’ We are not considering here the production of sounded words on the computer, which of course are even more readily assimilated to ‘secondary orality’” (80-81).

So tweets and text messages aren’t oral. They’re secondarily literate. Wait, that sounds horrible! How’s this: they’re artifacts and examples of secondary literacy. They’re what literacy looks like after television, the telephone, and the application of computing technologies to those communication forms. Just as orality isn’t the same after you’ve introduced writing, and manuscript isn’t the same after you’ve produced print, literacy isn’t the same once you have networked orality. In this sense, Twitter is the necessary byproduct of television.

Now, where this gets really complicated is with stuff like Siri and Alexa, and other AI-driven, natural-language computing interfaces. This is almost a tertiary orality, voice after texting, and certainly voice after interactive search. I’d be inclined to lump it in with secondary orality in that broader sense of technologically-mediated orality. But it really does depend on how transformative you think client- and cloud-side computing, up to and including AI, really are. I’m inclined to say that they are, and that Alexa is doing something pretty different from what the radio did in the 1920s and 30s.

But we have to remember that we’re always much more able to make fine distinctions about technology deployed in our own lifetime, rather than what develops over epochs of human culture. Compared to that collision of oral and literate cultures in the Eastern Mediterranean that gave us poetry, philosophy, drama, and rhetoric in the classical period, or the nexus of troubadours, scholastics, printers, scientific meddlers and explorers that gave us the Renaissance, our own collision of multiple media cultures is probably quite small.

But it is genuinely transformative, and it is ours. And some days it’s as charming to think about all the ways in which our heirs will find us completely unintelligible as it is to imagine the complex legacy we’re bequeathing them.

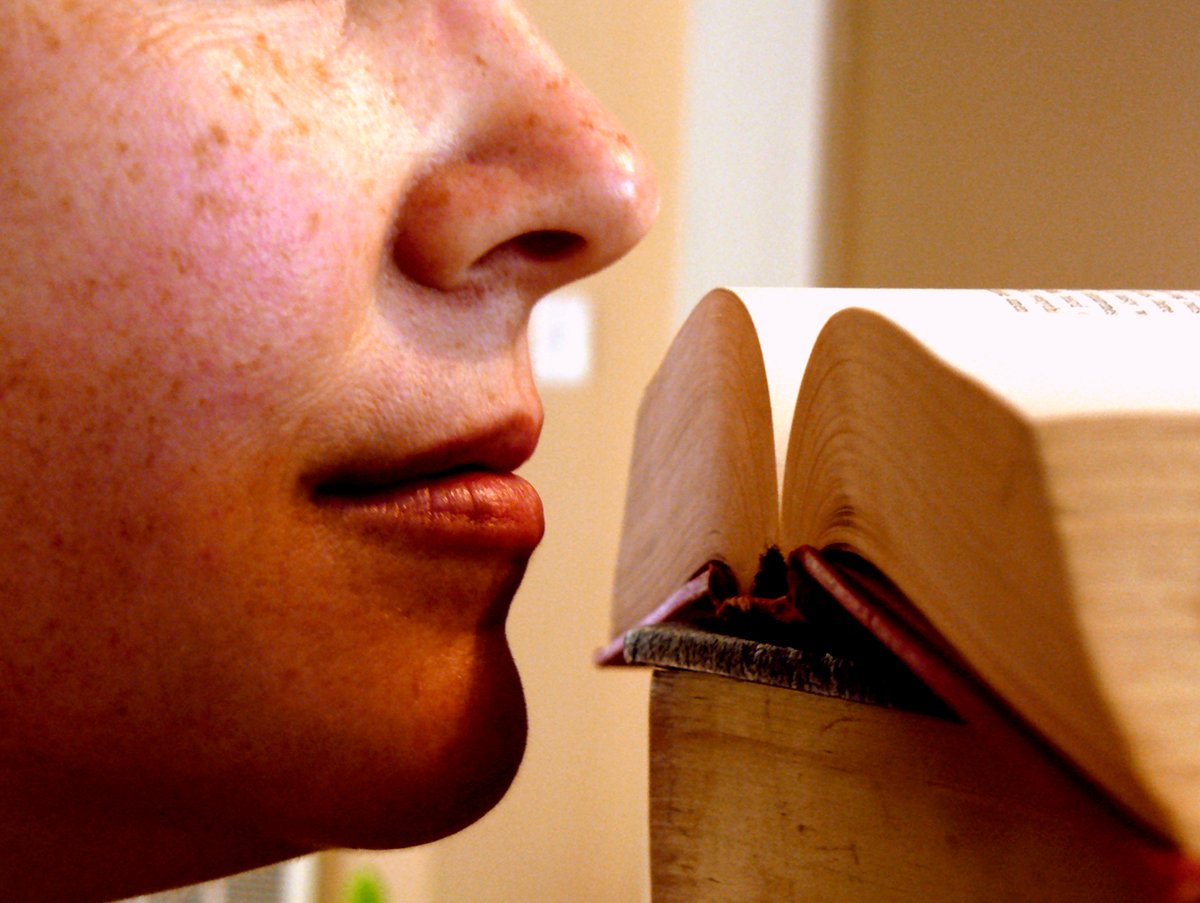

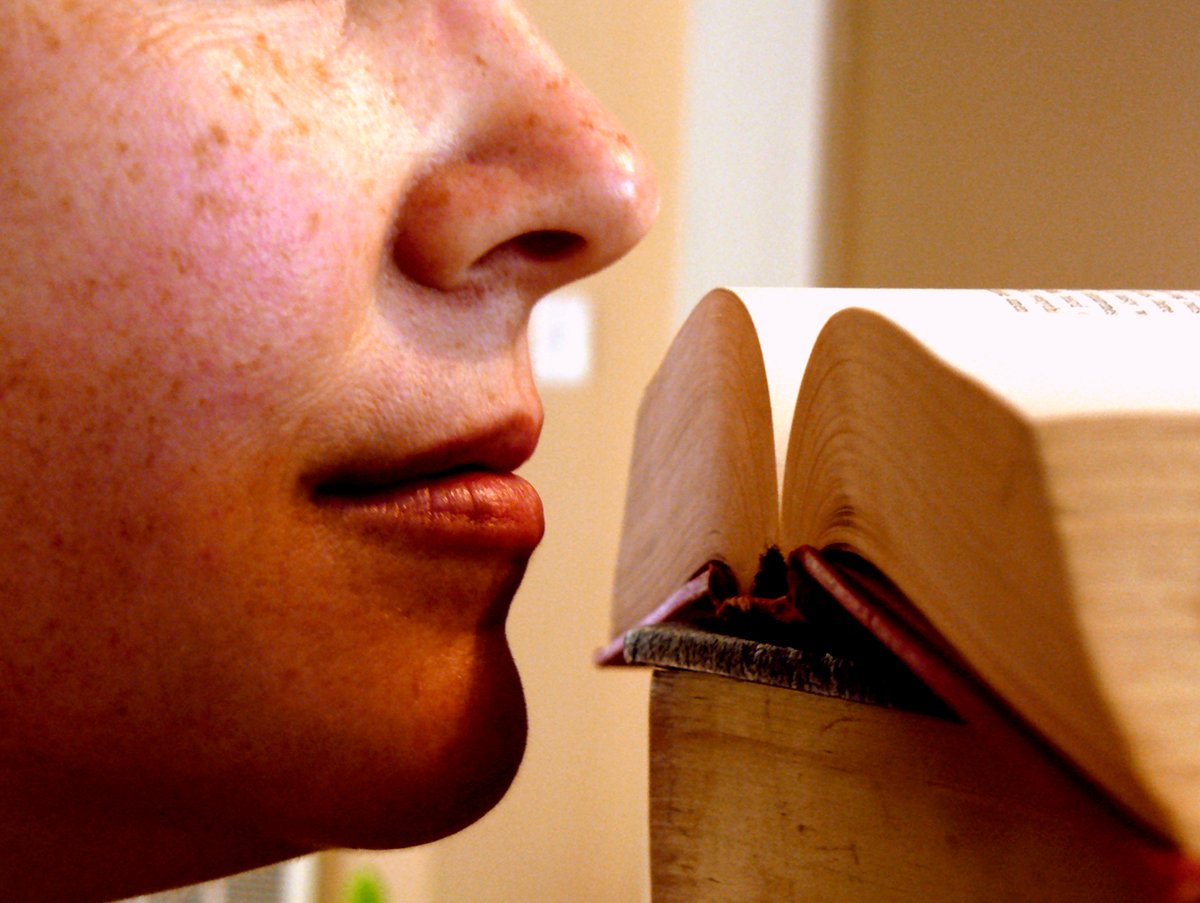

Earlier this week, I wrote about how literature transforms text into voice and experience — the word made flesh, basically. But the examples I used of “experience” focused, as such discussions often do, on the image: in other words, vision. And it should be obvious that embodied experience is much richer than that. Experience is tactile and spatial, mental and auditory. It’s not photography; it has a taste and a temperature.

Smell might be the most ineffable feature of animal life. Words and food go through the mouth, so language has a more intimate relationship with taste than it does with smell, the seat of breath. The language of taste colonizes other experiences almost as much as vision does. The word “taste” alone testifies to this.

Smell is more delicate, and harder to explain or describe. In conversation, we have words, sure, but mostly communicate smell through grunts or exaggerated faces. Or again, through analogy with taste — with which it’s closely linked.

It’s probably safe to say that the deeper we move into language, especially the more cerebral side of language, the more alienated we become from smell. The world stops being beings among beings, and becomes res extensa.

Still, writers have to try to find a way to square this circle. How do you do it? Do you try to dominate the reader and go all pulpy, immersive, and visceral? Or do you write around it: using smell as a starting point for memory and sensibility, counting on readers to fill in the gaps?

Consider this passage from Helena Fitzgerald and Rachel Syme’s perfume newsletter, The Dry Down:

What we know as “leather” in scent is really an intellectual idea and not a true distillation of anything; it’s the ingenious concept of covering up the smell of dried-out skins with flowers so that these skins can be sold as luxury goods. The real story behind this idea is one of violence and hideous smells: many of the bloody, gut-strewn tanneries of 16th century France were located in close proximity to the Grasse perfume distilleries (where flowers were also sent to their deaths by hot steam or being suffocated in tallow), so close that the air in town became a heady melange of life and death, all mixed up; it must have smelled overwhelming and nauseating and murderous and terrifying (but then, that was the way most of Europe smelled before sewage systems were invented). Legend has it that this intermingling began when Catherine de Medici came over from Italy to rule France in 1547 and asked the Grasse tanners to start scenting their gloves with jasmine to rid them of the putrid scent of the kill; oiled gant then became de rigeur among aristocratic French try-hards…

It was all the rage to pretend that the dead thing you were wearing on your hands arrived to your palace smelling like a rose; and that’s still pretty much where we are with leather. When you close your eyes and think of what a pure leather smells like to you (a S&M dungeon? Frye boots crunching over autumn leaves? A tawny satchel worn down by years of use?), what you must know is that whatever you are imagining is an artificial smell, a clever creation passed down from some genius in post-classical Provence who conjured up a way to give a pushy queen exactly what she desired. That smell of suede, of the inside of a new handbag, that’s always a damn lie. Actual leather smells like rotting, like wretching, like rigor mortis. It’s not pleasant, but then, luxury is about high-stakes deceit, about playing hide-the-damage inside buttery language and astronomical price tags.

Later, Rachel writes about a perfumer she met named Stephen Dirkes:

When we first met, Dirkes brought me real ambergris to smell, waving an antique tin of pungent whale belly under my nose; I will never forget it. He also brought civet and castoreum, true animal secretions, to show me how very close to death we are at all times when we love perfume. These materials don’t smell fresh or vibrant, they smell like the other side of the bell curve, the decline, the decay. Stephen was the first person to tell me about the Grasse tanneries, and I remember he said something like “There is a death drive in these smells,” and that perfume is often an expression of self-loathing as much as it is of self-love. And that’s what leather is to me. You can’t wear it unless deep down, you loathe humanity as much as you love it, unless you acknowledge the gory hidden history of our hedonism, unless you know, in your heart, that opulence is a cover-up job.

Helena, in the same newsletter, writes the following beautiful observation about the smell of books:

What makes old book smell work as a scent that can be worn on the skin is a leather note, and the other smells that mingle in a university library, the kind of place where more hidden corners, more secret hide-outs, reveal themselves the longer you stay - the undercurrent of cooped-up people’s sweat, a hum of anxiety and ambition and want, the waft of the snacks that somebody snuck in, the sudden out-of-place hope when someone cracks a window, the residue of cigarettes clinging to people’s hair and clothes when they come back in from one more smoke break during a marathon study session. It smells like the gummy, self-satisfied leather of old book bindings and the grateful sinking feeling when you flop onto a broken-down Chesterfield sofa. Leather dominates New Sibet, but it is rounded out by notes of ash and carnation, iris and fur and moss, and it comes together to smell like a library not in the romantic Beauty and the Beast sense of a library, but the lived-in, slightly gross, sleep-deprived, buzzing all-too-human and really pretty rank smell of a college library. Old book smell made human is admittedly a little bit gross, in the way that even the fanciest college with the most prestigious pedigree, the most beautiful wrought iron gates and most gracious green quadrangles is still full of college students, and college students are inevitably kind of disgusting. But that human grossness is what’s missing from old book smell, and it’s what makes New Sibet into a wearable expression of old book smell. It smells not just like books but like their context, the people crowding in around them, the bodies sinking time as lived experience into the leather covers of old books through their human smell.

I’m not much of a perfume person. I am, however, a writing and smelling person. And I love the way Helena and Rachel write about smells.

Via Amanda Mae Meyncke, who adds:

This is the thing I’ve told more people about in 2017 than anything else — it is an utter delight. I never cared about perfume at ALL before this, and their writing is so good and so precise, so intelligent and so ACCURATE, that every suggestion they’ve made has smelled exactly like their descriptions — which are a mixture of memory, sense, history and more — and they’ve done the impossible, making this aloof-seeming shit absolutely accessible and delightful.

(This is also a relatively cheap, luxurious, happy-making hobby in the midst of perilous times.)

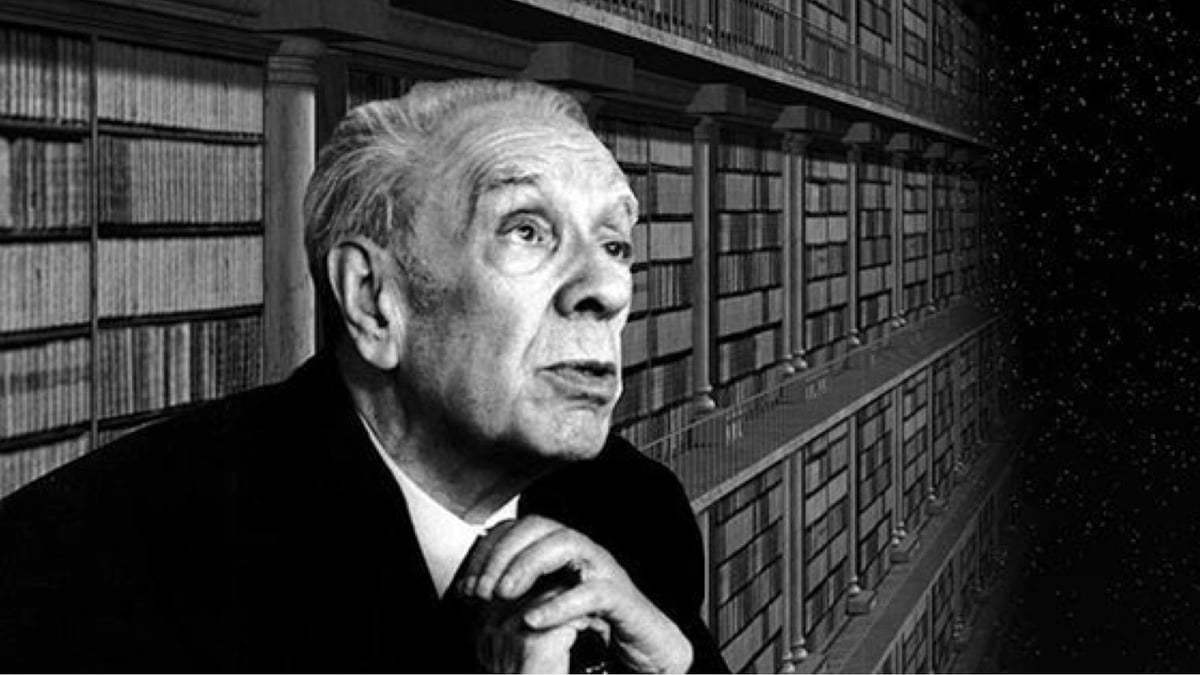

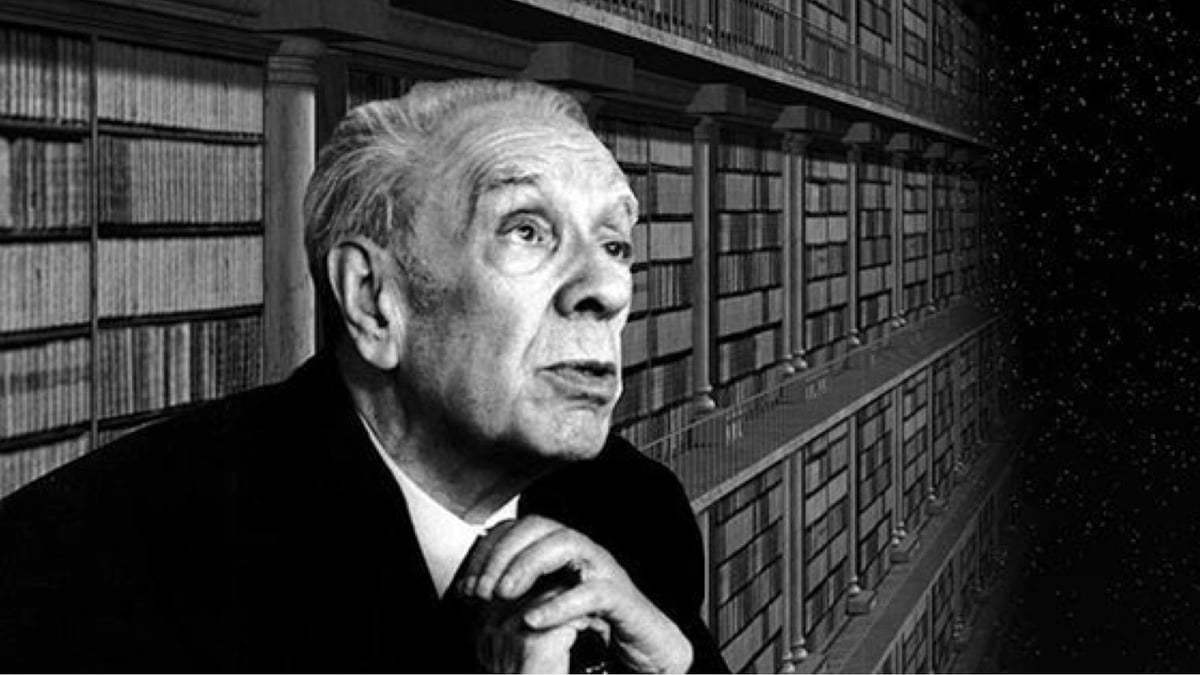

My favorite Jorge Luis Borges short story, “El Hacedor (The Maker),” is about the Greek poet Homer going blind:

He had never dwelled on memory’s delights. Impressions slid over him, vivid but ephemeral. A potter’s vermilion; the heavens laden with stars that were also gods; the moon, from which a lion had fallen; the slick feel of marble beneath slow sensitive fingertips; the taste of wild boar meat, eagerly torn by his white teeth; a Phoenician word; the black shadow a lance casts on yellow sand; the nearness of the sea or of a woman; a heavy wine, its roughness cut by honey-these could fill his soul completely. He knew what terror was, but he also knew anger and rage, and once he had been the first to scale an enemy wall. Eager, curious, casual, with no other law than fulfillment and the immediate indifference that ensues, he walked the varied earth and saw, on one seashore or another, the cities of men and their palaces. In crowded marketplaces or at the foot of a mountain whose uncertain peak might be inhabited by satyrs, he had listened to complicated tales which he accepted, as he accepted reality, without asking whether they were true or false.

Gradually now the beautiful universe was slipping away from him. A stubborn mist erased the outline of his hand, the night was no longer peopled by stars, the earth beneath his feet was unsure. Everything was growing distant and blurred. When he knew he was going blind he cried out; stoic modesty had not yet been invented and Hector could flee with impunity. I will not see again, he felt, either the sky filled with mythical dread, or this face that the years will transform. Over this desperation of his flesh passed days and nights. But one morning he awoke; he looked, no longer alarmed, at the dim things that surrounded him; and inexplicably he sensed, as one recognizes a tune or a voice, that now it was over and he had faced it, with fear but also with joy, hope, and curiosity. Then he descended into his memory, which seemed to him endless, and up from that vertigo he succeeded in bringing forth a forgotten recollection that shone like a coin under the rain, perhaps because he had never looked at it, unless in a dream.

Borges also gradually went blind, as had many members of his family. “The world of the blind is not the night that people imagine,” he said in a 1977 lecture called “Blindness,” collected in his magnificent posthumous anthology Selected Nonfictions. “I should say that I am speaking for myself, and for my father and my grandmother, who both died blind—blind, laughing, and brave, as I also hope to die. They inherited many things—blindness, for example—but one does not inherit courage.”

This lecture is the source of a famous Borges quote: “I had always imagined Paradise as a kind of library.” The quote is not usually given with its context: Borges had been appointed director of Argentina’s national library almost exactly when he became so blind he could no longer read.

No one should read self-pity or reproach

into this statement of the majesty of God;

who with such splendid irony

granted me books and blindness at one touch.

In his lecture, Borges discusses his own blindness, as well as that of Homer, Joyce, his predecessor at the library Paul Groussac, and a number of other writers. One of its most striking passages concerns John Milton, about whom Borges writes relatively little elsewhere. Milton, we’re told, went blind voluntarily, writing pamphlets in poor lighting to support the execution of Charles I, which landed him in political trouble after the Restoration. Borges likewise resisted, and survived, Peron’s rule in Argentina.

Borges is especially struck by Milton’s Samson Agonistes, which is also about a blind hero who strikes down a wicked king.

He wanted to create a Greek tragedy. The action takes place in a single day, Samson’s last. Milton thought on the similarity of destinies, since he, like Samson, had been a strong man who was ultimately defeated. He was blind. And he wrote those verses which, according to Landor, he punctuated badly, but which in fact had to be “Eyeless, in Gaza, at the mill, with the slaves” — as if the misfortunes were accumulating on Samson.

Milton has a sonnet in which he speaks of his blindness. There is a line one can tell was written by a blind man. When he has to describe the world, he says, “In this dark world and wide.” It is precisely the world of the blind when they are alone, walking with hands outstretched, searching for props. Here we have an example — much more important than mine — of a man who overcomes blindness and does his work: Paradise Lost, Paradise Regained, Samson Agonistes, his best sonnets, part of A History of England, from the beginnings to the Norman Conquest. All of this was executed while he was blind; all of it had to be dictated to casual visitors.

Here we return to Borges’s “The Maker”:

Why did those memories come back to him, and why did they come without bitterness, as a mere foreshadowing of the present?

In grave amazement he understood. In this night too, in this night of his mortal eyes into which he was now descending, love and danger were again waiting. Ares and Aphrodite, for already he divined (already it encircled him) a murmur of glory and hexameters, a murmur of men defending a temple the gods will not save, and of black vessels searching the sea for a beloved isle, the murmur of the Odysseys and Iliads it was his destiny to sing and leave echoing concavely in the memory of man. These things we know, but not those that he felt when he descended into the last shade of all.

In De pueris instituendis (On the education of children, 1529), the great Renaissance humanist Erasmus, borrowing from the classical rhetoricians Horace and Quintilian, helped reintroduce an important idea:

I have now come to the stage of my argument where I shall briefly explain how love of study may be instilled in children - a subject which I have already touched upon in part. As I have said, through practice we acquire painlessly the ability to speak. The art of reading and writing comes next; this involves some tedium, which can be relieved, however, by an expert teacher who spices his instruction with pleasant inducements. One encounters children who toil and sweat endlessly before they can recognize and combine into words the letters of the alphabet and learn even the bare rudiments of grammar, yet who can readily grasp the higher forms of knowledge. As the ancients have demonstrated, there are artful means to overcome this slowness. Teachers of antiquity, for instance, would bake cookies of the sort that children like into the shape of letters, so that their pupils might, so to speak, hungrily eat their letters; for any student who could correctly identify a letter would be rewarded with it.

In grad school, I worked with a British literary historian who expertly broke this down into a post-psychoanalytic framework. Biscuits, like speech and writing, form a circuit between the eyes, hand, and mouth. The regulation of desire clears the way for the discipline of discourse. Like Plato, we move from the immanent and particular to the abstract and universal, but this is always mediated by the body, whose conflicting drives trouble these ideal categories.

I responded: “It’s Cookie Monster.” Growing up in England, he’d never heard of him.

Cookie’s idiosyncratic pronouns and truncated consonant clusters are a ruse. He’s easily the most verbally adept, best-educated character on Sesame Street. He teaches children the alphabet and vocabulary, and of course doubles as Alistair Cookie on Monsterpiece Theater. The growly voice, googly eyes, and outsized yearnings mask the heart of a scholar.

I bet he used to be a graduate student. You can’t show any of us free food without us reacting like this.

He even loves absurdist metafiction:

Cookie is all of us who always get underestimated, just because we refused to always change how we talk and how we act because we went to school. But we love those sweet leatherbound books, too.

NOTE: Cookie Monster was invited and was originally slated to collaborate on this post. He was excited; I was excited. Unfortunately, due to a scheduling conflict, he wasn’t able to appear. You have his and my regrets. (I swear on Mr. Snuffleupagus: All of this is 100 percent true.)

Adam Phillips is a writer and psychoanalyst, working on (among other things) a digressive, deflationary biography of Freud. He recently gave an “Art of Nonfiction” interview to The Paris Review which is one of those great Paris Review interviews about writing and life and approaching the universe.

PHILLIPS: Analysis should do two things that are linked together. It should be about the recovery of appetite, and the need not to know yourself. And these two things—

INTERVIEWER: The need not to know yourself?

PHILLIPS: The need not to know yourself. Symptoms are forms of self-knowledge. When you think, I’m agoraphobic, I’m a shy person, whatever it may be, these are forms of self-knowledge. What psychoanalysis, at its best, does is cure you of your self-knowledge. And of your wish to know yourself in that coherent, narrative way…

I was a child psychotherapist for most of my professional life. One of the things that is interesting about children is how much appetite they have. How much appetite they have—but also how conflicted they can be about their appetites. Anybody who’s got young children, or has had them, or was once a young child, will remember that children are incredibly picky about their food. They can go through periods where they will only have an orange peeled in a certain way. Or milk in a certain cup.

INTERVIEWER: And what does that mean?

PHILLIPS: Well, it means different things for different children. One of the things it means is there’s something very frightening about one’s appetite. So that one is trying to contain a voraciousness in a very specific, limiting, narrowed way. It’s as though, were the child not to have the milk in that cup, it would be a catastrophe. And the child is right. It would be a catastrophe, because that specific way, that habit, contains what is felt to be a very fearful appetite. An appetite is fearful because it connects you with the world in very unpredictable ways. Winnicott says somewhere that health is much more difficult to deal with than disease. And he’s right, I think, in the sense that everybody is dealing with how much of their own aliveness they can bear and how much they need to anesthetize themselves.

We all have self-cures for strong feeling. Then the self-cure becomes a problem, in the obvious sense that the problem of the alcoholic is not alcohol but sobriety. Drinking becomes a problem, but actually the problem is what’s being cured by the alcohol. By the time we’re adults, we’ve all become alcoholics. That’s to say, we’ve all evolved ways of deadening certain feelings and thoughts. One of the reasons we admire or like art, if we do, is that it reopens us in some sense—as Kafka wrote in a letter, art breaks the sea that’s frozen inside us. It reminds us of sensitivities that we might have lost at some cost.

Here is my third installment in pulling down random books from my shelves and writing about them, under the belief that the internet is better when not all of it comes from the internet.

Eugen Weber is a wonderful, sassy cultural historian. His best-known book is probably Peasants Into Frenchmen: The Modernization of Rural France, 1870-1914.

When I first moved to Philadelphia, one of my favorite things about staying up too late was catching episodes of his documentary series The Western Tradition on PBS at 3 AM. (Now you can stream the whole series free at Learner.org, which I just found out today.)

This is a passage from France Fin de Siecle, a really terrific book about art, culture, and literature in mid-to-late 19th-century France. And I swear to God, I think about this particular section all the time.

If one considers the scarceness of water and of facilities for its evacuation, it is not surprising that washing was rare and bathing rarer. Clean linen long remained an exceptional luxury, even among the middle classes. Better-off buildings enjoyed a single pump or tap in the courtyard. Getting water above the ground floor was rare and costly; in Nevers it became available on upper floors in the 1930s. Those who enjoyed it sooner, as in Paris, fared little better.

Baths especially were reserved for those with enough servants to bring the tub and fill it, then carry away the tub and dirty water. Balzac had referred to the charm of rich young women when they came out of their bath. Manuals of civility suggest that this would take place once a month, and it seems that ladies who actually took the plunge might soak for hours: an 1867 painting by Alfred Stevens shows a plump young blonde in a camisole dreaming in her bathtub, equipped with book, flowers, bracelet, and a jeweled watch in the soap-dish. Symbols of wealth and conspicuous consumption.

In a public lecture course Vacher de Lapouge affirmed that in France most women die without having once taken a bath. The same could be said of men, except for those exposed to military service. No wonder pretty ladies carried posies: everyone smelled and, often, so did they.

Teeth were seldom brushed and often bad. Only a few people in the 1890s used toothpowder, and toothbrushes were rarer than watches. Dentists too were rare: largely an American import, and one of the few such things the French never complained about. Because dentists were few and expensive, one would find lots of caries, with their train of infections and stomach troubles; it is likely that most heroes and heroines of nineteenth-century fiction had bad breath, like their real-life models.

Yep. That’s why we call them “the unwashed masses.”

It wasn’t until the twentieth century that most people took a bath, washed their underwear, flushed a toilet, saw their own reflection in a mirror, or stopped dying at atrocious rates every time they gave birth to a child. How’s that mistake looking now, Werner?

One thing I will be doing from time to time this week is pulling down random books from my shelves and writing about them, under the belief that the internet is better when not all of it comes from the internet. Here’s the first installment.

According to tradition, Simonides of Keos was the first Greek poet who composed and sang poems for money, rather than being kept by a patron. He was also famously stingy and liked to pose riddles:

They say that Simonides had two boxes, one for favors, the other for fees. So when someone came to him asking for a favor he had the boxes displayed and opened: the one was found to be empty of graces, the other full of money. And that’s the way Simonides got rid of a person requesting a gift.

Simonides’s world was one where old relationships of gift-exchange and patronage were breaking down in favor of what for Greeks was a fairly new invention, coinage. And all of his poetry and the stories around him seem to play with this: how the old world mythic heroes and Gods (Homer’s subjects) gave way to Olympic champions and rich merchants (Simonides’s subjects), the way value can be real but invisible, how words can be things that you exchange, like gifts or cash. This is one thing that helps make Simonides unusually modern.

That, at least, is poet/critic Anne Carson’s take in her terrific book Economy of the Unlost, which juxtaposes Simonides and the equally staggering twentieth-century poet Paul Celan:

Simonides of Keos was the smartest person in the fifth century B.C., or so I have come to believe. History has it that he was also the stingiest. Fantastical in its anecdotes, undeniable in its implications, the stinginess of Simonides can tell us something about the moral life of a user of money and something about the poetic life of an economy of loss.

No one who uses money is unchanged by that.

No one who uses money can easily get a look at their own practice. Ask eye to see its own eyelashes, as the Chinese proverb says. Yet Simonides did so, not only because he was smart.

Another argument Carson makes is that because Simonides was willing to write for anyone who would pay, his epitaphs, literally writing that would be inscribed on a gravestone, are the “first poetry in the ancient Greek tradition about which we can certainly say, these are texts written to be read: literature” (emphasis mine).

I’ve always thought that passivity is underrated. One of the nice things about going to the movies is that once you’re there, everything just happens to you. In the seventies, film theory took on a lot of anti-consumerist and weirdly sexual politics where, for some reason, it was better to be active than passive, which always just feels very vanilla. I mean, sometimes you’re active, sometimes you’re passive, and sometimes you’re just playing around, which isn’t really either, but all three are good.

You could apply this three-part scheme to a lot of things (three-part schemes are good for that), but I thought of it reading this 1997 essay “The Book and the Labyrinth,” on cybertexts and literature that, like a lot of games we’re familiar with, cycles through a variety of different architectural possibilities.

The author, Espen J. Aarseth, gives three predigital historical examples of this kind of literature: the I Ching, which is literally random, like throwing dice; Apollinaire’s Calligrammes, which contains something more like concrete poetry that can be read in multiple (or sometimes just unexpected) directions (there are plenty of “traditional” free verse poems in that book, too); and Raymond Queneau’s Cent Mille Milliards de Poemes (One Hundred Thousand Billion Poems), a title that sounds about as right in French as “a million billion trillion dollars” does in English: ten sonnets printed on cards with each line on a separate strip, where all the lines can be recombined to produce new sonnets in any sequence.
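For the arithmetically curious, here is a rough sketch of where Queneau's number comes from. It assumes the standard fourteen-line sonnet and uses placeholder strings instead of his actual lines; the names `total_poems`, `random_poem`, and `STRIPS` are made up for illustration. With ten choices for each of fourteen line positions, you get 10 to the 14th power, which is exactly one hundred thousand billion.

```python
import random

SONNETS = 10  # ten source sonnets, per the description above
LINES = 14    # assumption: each is a standard fourteen-line sonnet

# Placeholder strips, not Queneau's text: STRIPS[i][j] stands in for
# line j of sonnet i.
STRIPS = [
    [f"sonnet {i + 1}, line {j + 1}" for j in range(LINES)]
    for i in range(SONNETS)
]


def total_poems(sonnets=SONNETS, lines=LINES):
    """Count the possible poems: one independent choice per line position."""
    return sonnets ** lines


def random_poem(strips, rng=random):
    """Build one poem by picking, for each line position, a random sonnet's strip."""
    line_count = len(strips[0])
    return [rng.choice(strips)[j] for j in range(line_count)]


if __name__ == "__main__":
    print(f"{total_poems():,} possible poems")
    for line in random_poem(STRIPS):
        print(line)
```

Each run flips a different set of strips, which is about as close as a script can get to turning the pages of the book.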

Whitney Anne Trettien calls these “text-generating mechanisms,” and her thesis (Computers, Cut-ups & Combinatory Volvelles) offers much more history on this kind of literary play, while also being an excellent example of what Aarseth would call a cybertext.

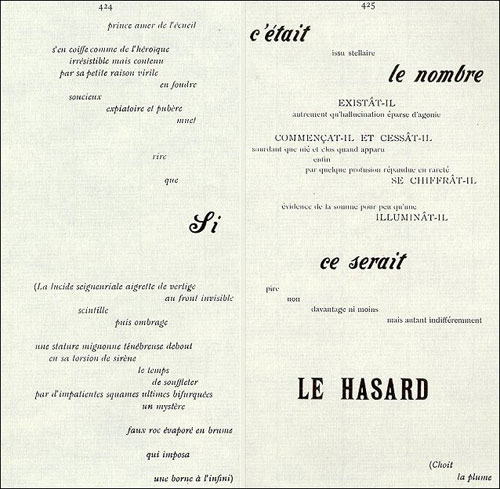

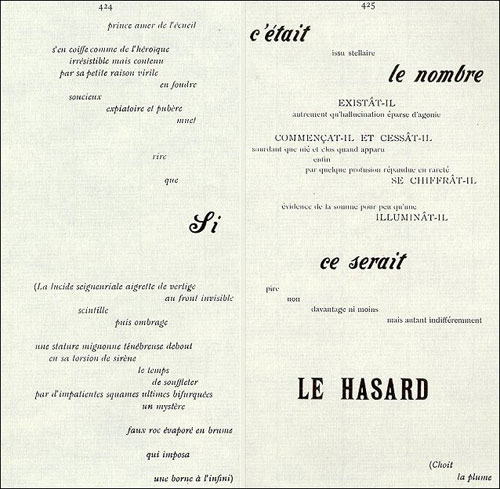

My two favorites, however, are both by French poet Stephane Mallarme. (The French love these things almost as much as they love their accented vowels.) This is an image from his poem “Un coup de des jamais n’abolira le hasard” or “A throw of the dice will never abolish chance”:

You can read the poem linearly (such as it is), but even to find its putative title, you have to skip words, finding a thread in different fonts and sizes. It’s the first (and maybe the last) truly avant-garde poem that uses space this way. And it never turned out exactly the way Mallarme wanted it.

He had precise instructions on the layout of the poem, he had precise instructions on the layout of negative space, he had precise instructions on the kind and quality of paper to be used, the type of binding, and so forth. This radical, go-in-any-direction-you-wish experiment was through-designed in a way that was impossible to fulfill.

It’s not a poem even as we would recognize it. It’s an architecture.

My other favorite example was called simply Le Livre (The Book), or sometimes The Great Work. We don’t even know its contents. All we have are unpublished notes that detail (in part) its physical arrangement and rearrangement, storage, where the reader of the book would stand in relationship to the audience while a reading was performed (yes, this book would be performed), how much money would be charged, how many performances could occur in each day, etc., etc., etc.

It’s like having detailed instructions for the proper handling of the ark of the covenant, and no ark. And it wasn’t lost — there never was one.

And that may be where we are. The only way to abolish chance — to create the space for action, audience, and game simultaneously — may be to create a structure with nothing inside, no mistakes to be made because there is nothing for them on which to be made, “a labyrinth with no center” (which is what Borges called the “metaphysical detective story” Citizen Kane).

Mallarme invented vaporware, the LOST questions that never get answered, the giant 404. It wasn’t his fault. It was supposed to be great.

In a letter to the editor in 1988, literary critic Eddie Dow tried to set the record straight:

In 1926 Fitzgerald published one of his finest stories, “The Rich Boy,” whose narrator begins it with the words “Let me tell you about the very rich. They are different from you and me.”

Ten years later, at lunch with his and Fitzgerald’s editor, Max Perkins, and the critic Mary Colum, Hemingway said, “I am getting to know the rich.” To this Colum replied, “The only difference between the rich and other people is that the rich have more money.” (A. Scott Berg reports this in “Max Perkins, Editor of Genius.”) Hemingway, who knew a good put-down when he heard one and also the fictional uses to which it could be put, promptly recycled Colum’s remark in one of his best stories, with a revealing alteration: he replaced himself with Fitzgerald as the one put down. The central character in “The Snows of Kilimanjaro” remembers “poor Scott Fitzgerald and his romantic awe of [the rich] and how he had started a story once that began, ‘The very rich are different from you and me.’ And how someone had said to Scott, yes, they have more money.”

World-class athletes, though, really do seem to be different from you and me, and not just because they have better physical skills and (some of them) more money. We act shocked when athletes we think we understand, like LeBron James or Tiger Woods, surprise us with their behavior, or when a great player like Isiah Thomas degenerates into a complete lunatic once he’s off the court. (Sorry, Knicks fans.)

The strangeness (and unbelievable abilities) of top athletes is the theme of David Foster Wallace’s 1996 essay “The String Theory,” about the lower rungs of pro tennis:

Americans revere athletic excellence, competitive success, and it’s more than lip service we pay; we vote with our wallets. We’ll pay large sums to watch a truly great athlete; we’ll reward him with celebrity and adulation and will even go so far as to buy products and services he endorses.

But it’s better for us not to know the kinds of sacrifices the professional-grade athlete has made to get so very good at one particular thing. Oh, we’ll invoke lush cliches about the lonely heroism of Olympic athletes, the pain and analgesia of football, the early rising and hours of practice and restricted diets, the prefight celibacy, et cetera. But the actual facts of the sacrifices repel us when we see them: basketball geniuses who cannot read, sprinters who dope themselves, defensive tackles who shoot up with bovine hormones until they collapse or explode. We prefer not to consider closely the shockingly vapid and primitive comments uttered by athletes in postcontest interviews or to consider what impoverishments in one’s mental life would allow people actually to think the way great athletes seem to think. Note the way “up close and personal” profiles of professional athletes strain so hard to find evidence of a rounded human life — outside interests and activities, values beyond the sport. We ignore what’s obvious, that most of this straining is farce. It’s farce because the realities of top-level athletics today require an early and total commitment to one area of excellence. An ascetic focus. A subsumption of almost all other features of human life to one chosen talent and pursuit. A consent to live in a world that, like a child’s world, is very small.

This willful ignorance breaks down when 1) an athlete is sufficiently famous and dominant that we expect more from him, 2) an athlete suddenly fails to succeed, 3) an athlete allows those idiosyncrasies out more than is necessary, 4) an athlete’s competing in a sport that we don’t understand well or follow closely.

For instance, Michael Jordan is a great example of a top athlete who never broke character, whose talents never let us down (that stint with the Wizards being apocryphal, and best ignored), and who won at the highest level in a widely followed sport. Yet by all accounts, he was a hypercompetitive, gambling-addicted sociopath. In The Book of Basketball, Bill Simmons offers my favorite take on Jordan:

Chuck Klosterman pointed this out on my podcast once: for whatever reason, we react to every after-the-fact story about Michael Jordan’s legendary competitiveness like it’s the coolest thing ever. He pistol-whipped Brad Sellers in the shower once? Awesome! He slipped a roofie into Barkley’s martini before Game 5 of the ‘93 Finals? Cunning! But really, Jordan’s competitiveness was pathological. He obsessed over winning to the point that it was creepy. He challenged teammates and antagonized them to the point that it became detrimental. Only during his last three Chicago years did he find an acceptable, Russell-like balance as a competitor, teammate, and person.

And still, nearly everyone agrees (and I do too) that this made Jordan the best basketball player, certainly better than Shaq and Wilt and (so far) LeBron, who just had different pathologies.

At Deadspin, Katie Baker takes this in a different direction, looking at ESPN’s 30 for 30 documentary on BMX and X Games legend Mat Hoffman:

[A] leprechaun-faced, sparkle-eyed freestyling daredevil who did things on sketchily self-constructed ramps in his tweenage backyard (“he’s this shady little kid from Oklahoma just blasting,” recalls one former pro from that era) that no one else in the sport had even conceived of. Hoffman was so instant a splash that in his first sanctioned competition he took first in the amateur bracket, turned pro on the spot, and then went on to win first place in that class as well. By the next day he had 15 sponsors lined up.

But while the retrospective into Hoffman’s game-changing theatrics appears on the surface a delish amuse-bouche for the X Games, it also may cause a few viewers to choke. He nails 900s, yes, but he also breaks over 300 bones. He flies high, but then he lays low. Like, in a coma-type low. As one friend of Hoffman says in the film, describing his jumps off an ever-heightening ramp: “It would go from this beautiful soaring thing to a violent crash so suddenly. We’d be like, ‘is he dead?’…

It’s easy to see films like these and lament the death-defying choices of men who have families and children, to judge them harshly for their inability to say no, but I wonder sometimes what the alternative is. Some people are simply hard-wired this way. (It’s almost too perfect that Hoffman had a dear friendship with Evel Knievel.)

Tony Hawk understands, saying: “That’s who we are! We love it too much to hang it up. I hate when people ask me that: ‘When are you hanging it up?’ Like, if I’m standing on my own two feet? I’m riding a skateboard.”

You can’t watch the footage of Hoffman as a young kid and not see that he’s different, that he can’t not do these things. “I just kick my feet,” he tells one professional rider who asks how he pulls off an impossible move, sounding like some kind of Will Hunting savant. He talks about lying in bed dreaming about how to build higher ramps. “That’s the fabric of who Mat is,” says one friend. Who are we to tell him to change?

Add in the fact that Hoffman suffered his most life-threatening injuries trying to perform for TV audiences for ESPN and The Wide World of Sports, and it’s hard to see exactly what the difference is between him and football players or boxers suffering one concussion (or some other major injury) after another, sometimes dying on the field or in the ring, in far too many cases dying too young.

The one difference between Hoffman and the others is that he didn’t make fans feel betrayed the way a celebrity like LeBron did, he wasn’t easily ignored like Wallace’s low-level tennis pros fighting it out in the qualies just to make a living, and he didn’t entertain a gigantic audience for more than a decade like Michael Jordan or Muhammad Ali.

We are all witnesses.

Update: Reader Nick pointed out that the first version of this post implied that Hoffman’s career was significantly shorter than Jordan’s or Ali’s; the contrast I was trying to draw was between the allowances most of us make for athletes in “major” sports versus those in “extreme” competition, especially when the former are just as dangerous and personality-specific as the latter, if not more so.
